You publish an AI draft and the metrics feel flat.

Time on page is low, shares are scarce, and the tone sounds off.

That mismatch isn’t imagination.

A 2025 Content Marketing Institute survey found 63% of marketers reported challenges with AI-generated content, particularly keeping authenticity and brand voice.

Some problems are artistic.

AI often misses subtlety and emotional depth, which damages audience connection. Algorithmic bias also creeps into messaging.

Skewed training data can make content feel exclusionary or tone-deaf.

Legal and privacy pitfalls are rising.

In 2025, 58% of businesses encountered legal challenges with AI usage in marketing, mostly about data privacy and compliance.

Platform tools such as HubSpot and Jasper AI show clear progress and clear limits.

They speed production but also surface common AI content marketing challenges that teams must manage.

Ignoring these limitations puts brand trust, compliance, and marketing budgets at real risk.

Table of Contents

Opening diagnosis: Do we really trust AI to carry our content strategy?

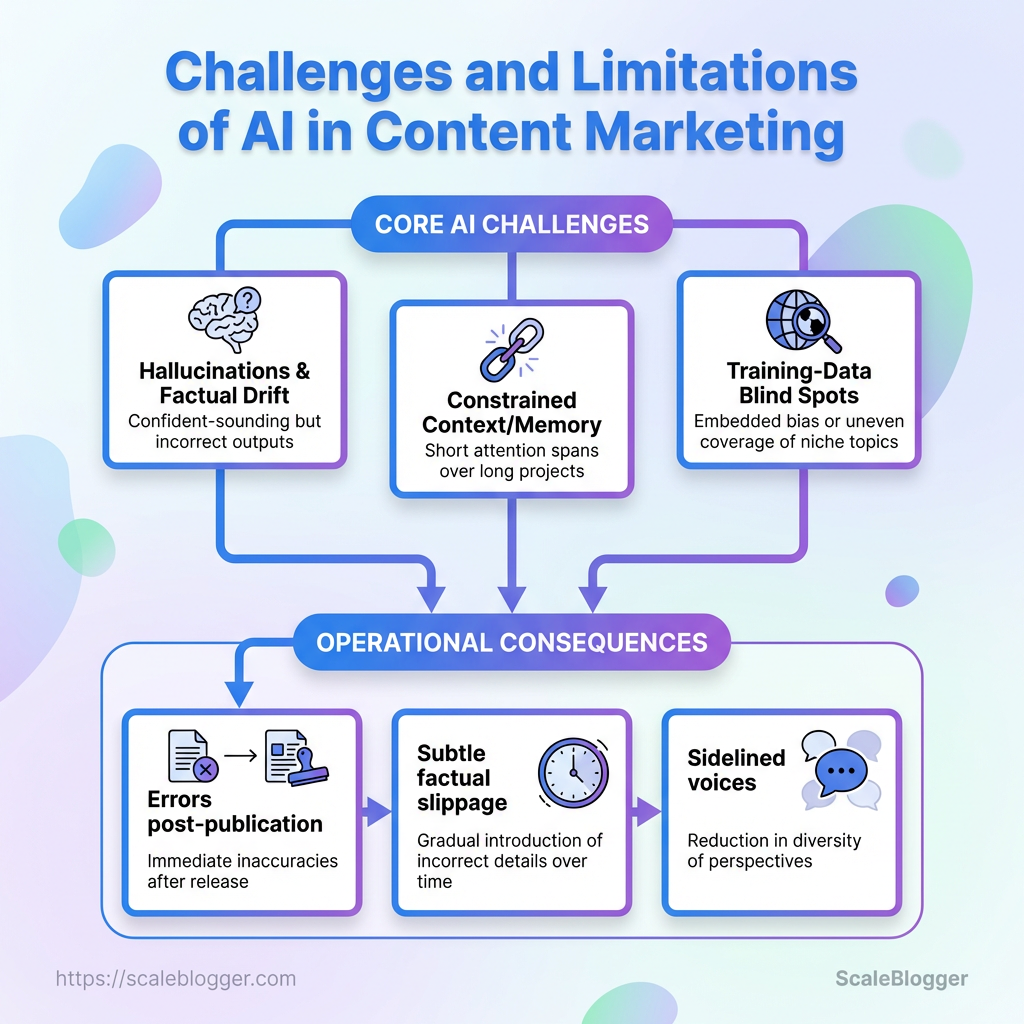

Trusting AI to own a content strategy feels like handing over the keys to a smart but mercurial intern.

Many teams assume automation will scale voice, save time, and eliminate writer’s block.

Reality usually looks messier: outputs can drift from brand tone, repeat biases, or miss the human emotional cues that spark engagement.

This section diagnoses that gap. It contrasts common assumptions with observed problems. It also sets the scope: practical signals to watch, three recurring pain points creators report, and what this explainer will cover next.

Start with what tech-savvy creators are actually telling us.

Quality drift: AI can produce polished copy fast, but creators say it often lacks nuance and originality.

Tools like Jasper AI have faced criticism for outputs that read generic rather than deeply human.

Voice erosion: Brands report difficulty keeping a consistent

brand voicewhen multiple AI prompts and templates are used across teams.Compliance and privacy headaches: Creators worry about data handling and legal exposure when models ingest customer information.

GDPRcompliance and related rules complicate personalization.

63% of marketers reported facing challenges with AI-generated content in a 2025 survey by the Content Marketing Institute, particularly around maintaining authenticity and brand voice.

58% of businesses in 2025 encountered legal challenges with AI usage in marketing, primarily regarding data privacy issues and compliance.

Those numbers show this isn’t theoretical — it’s already affecting strategy decisions.

They underscore common limitations of AI in marketing and real AI content pitfalls teams run into when they expect a push-button solution.

Imagine a fintech brand that uses AI to draft policy summaries.

The drafts read fine but miss key regulatory caveats.

That gap creates risk and extra review cycles, which erases the time savings AI promised.

Platforms from HubSpot automate content creation and analytics, and they help scale personalized workflows.

Yet automation should sit inside a governance layer that enforces voice, editorial standards, and legal checks.

Trusting AI doesn’t mean removing humans.

It means redefining roles: AI as engine, humans as stewards.

Keep the steering wheel in human hands.

Core technical limitations of AI models

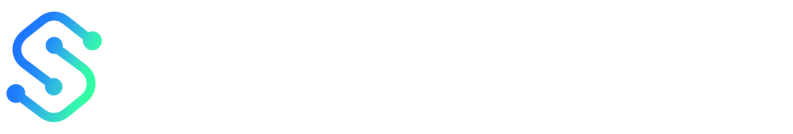

AI models deliver rapid drafts and scale, but they trip over three structural limits that matter for content strategy: hallucinations and factual drift, constrained context/memory, and training-data blind spots that embed bias or gaps.

These are not quirky bugs; they come from how models are trained and how they predict text.

Expect confident-sounding outputs that can be wrong, short attention spans across long projects, and uneven coverage of niche or emerging topics.

Teams that ignore these limits end up chasing errors after publication.

The problem shows up as subtle factual slippage, personalization that loses thread, or content that systematically sidelines certain voices.

Those are technical problems with operational consequences — and they require engineering plus editorial guardrails, not just prompts.

Designing for those limits changes how content flows get built.

Rather than treating the model as the author, treat it as a specialist that drafts, cites, and feeds into verification steps.

That mindset reduces many AI content marketing challenges and avoids common AI content pitfalls.

Model hallucinations and factual drift — causes and signals

Hallucinations stem from the training objective: predicting plausible continuations, not guaranteeing truth.

Stale or incomplete training data amplifies drift over time.

When models chain prompts without grounding, invented specifics appear.

Watch for these signals before publishing:

Confident specificity: model invents dates, studies, or quotes without source.

Inconsistent facts: earlier sentences contradict later ones.

Unverifiable claims: numbers or case details that resist quick checking.

Citation hallucinations: fake papers, journals, or URLs presented as evidence.

Tone–fact mismatch: florid language masking shallow substance.

Mitigate with retrieval-augmented generation, verification layers, and human fact-checkers.

Automate checks that flag unverifiable assertions and require source attachments before publishing.

Context and memory constraints in content flows

Most models have finite context windows.

They forget earlier prompts or prior-article threads once the window fills.

That disrupts multi-article series, long-form narratives, and persistent personalization.

Practical fixes include external memory stores: versioned briefs, vector embeddings for past content, and canonical briefs loaded into each generation.

For personalization, keep user state in a database and inject only the minimal, verified slice into prompts.

HubSpot-style AI features can accelerate personalization, but they rely on robust state management to avoid context loss.

Training data blind spots and bias implications

Training corpora reflect what was available and amplified.

That creates blind spots in niche domains and perpetuates cultural or demographic bias.

Jasper AI has been criticized for outputs that lack depth or human nuance, a symptom of those limits.

Audit datasets regularly, add targeted fine-tuning, and insert counterfactual examples in training.

Remember the risk isn’t only reputation — 58% of businesses reported legal challenges with AI in marketing in 2025, often around privacy and compliance.

And 63% of marketers in 2025 said authenticity and brand voice were pain points with AI content. Hallucination: A fluent but factually incorrect model output. Context window: The model’s limited token memory for prompts and recent text. Training-data bias: Systematic omission or skew in the examples models learned from.

Treat models as tools with borders.

Build verification, memory, and bias-audit steps into your content pipeline so AI becomes an accelerant, not a liability.

Content-quality pitfalls that impact SEO and engagement

Most AI drafts look polished at first glance but often lack the layered evidence and argument that search engines and engaged readers reward.

A readable sentence and a tidy structure don’t guarantee topical authority, original research, or links to primary sources — all things that help pages climb rankings and keep visitors on the page.

That gap explains a lot of the frustration teams report when wrestling with AI content marketing challenges.

Content can pass a human quick-scan yet fail to satisfy query intent, produce backlinks, or reduce pogo-sticking because it never digs past surface-level claims. Fixing that requires treating AI as a drafting engine, not a finished product.

You need guardrails for depth, deliberate checks for voice, and tests that catch repetitive phrasing and template artifacts before publishing.

Surface fluency vs. depth: why content can read well but fail to rank

Attribute | AI-generated (no human edit) | Human-written | AI-generated with human edit |

|---|---|---|---|

Factual accuracy | Variable; factual errors and hallucinations common | High when researched; depends on writer skill | Much improved; editor corrects errors and adds sources |

Topical depth | Shallow coverage of related subtopics | Deep, often includes original angles and micro-topics | Deepened with targeted research and added subtopics |

Original insights | Rare; tends to remix existing text | Common; original viewpoints and experience-based insights | Frequent; editor adds unique analysis and anecdotes |

Speed to publish | Very fast; near-instant drafts | Slow; research and drafting take time | Fast-to-moderate; draft speed preserved, review adds time |

SEO friendliness | Basic keyword use; may miss semantic coverage | Intent-driven structure and internal linking | Strong; combines AI suggestions with editorial SEO tuning |

Scalability | Extremely high; content volume increases quickly | Limited by human resources | Scalable with controlled quality via editorial workflows |

Readability | Fluent, consistent tone | Varied tone tailored to audience | Fluent with tailored tone corrections |

Engagement potential | Low–medium; may lack hooks or novel examples | High when narrative and examples used | Higher when editor injects stories and CTAs |

Risk of bias | Carries training-data biases | Lower if writer is aware and diverse | Reduced when editor audits for bias |

Maintenance cost | Low per item but risk of correction backlog | Higher due to bespoke content | Moderate; editing front-loads cost but lowers rework |

Updateability | Easy to regenerate, may repeat mistakes | More effort but controlled updates | Easier with human oversight to ensure accuracy |

Legal / compliance risk | Higher; privacy or copyright issues possible | Lower if research and sourcing follow rules | Lower when editor verifies licenses and compliance |

AI drafts win at speed and surface fluency, but the rows above show why search engines reward the human layer.

Combining AI scale with editorial rigor yields the best balance of rankability and engagement.

Surface fluency vs. depth Readers and search engines reward original, evidence-backed content over paraphrased summaries.

AI can generate clear prose but often skims subtopics and misses primary sources.

That makes content vulnerable to low dwell time and weak backlink profiles.

Audit depth: evaluate whether each section cites primary sources or adds unique data.

Add primary material: inject interviews, charts, or original examples to establish authority.

Layer references: include linked sources and short annotations for each claim.

Tone and brand voice erosion Scale amplifies small voice shifts into readable but inauthentic copy.

When dozens of AI drafts get published, brand voice fragments and readers notice subtle inconsistencies.

Voice guide: document 6–8 tonal rules (sentence length, idioms, humor level).

Editorial pass: assign an editor to enforce brand voice on every AI draft.

Voice QA: sample published posts monthly and score for drift.

Repetitiveness and pattern artifacts AI often repeats phrasing, structural patterns, and common transitions that bore readers and trigger engagement drops.

Pattern artifacts also reduce perceived expertise.

Diversity edits: replace repeated sentence openers and CTAs with varied alternatives.

Read-aloud check: scan for repeated rhythms and restructure sections.

A/B test headlines and intros to detect which patterns degrade click-through and time-on-page.

Human oversight is the defense against the limitations of AI in marketing.

Treat models as accelerants, not shortcuts, and build an editorial loop that prioritizes depth, voice, and variety.

For teams building that loop, consider platforms that integrate draft generation with editorial workflows, such as Scaleblogger.

Operational and workflow challenges

Operational limits of AI become clear when teams find that despite rapid drafts, integration into editorial workflows creates unforeseen challenges.

Many marketers struggle with issues surrounding authenticity and brand voice, as highlighted by recent data showing a considerable portion encountered legal challenges tied to AI use.

To successfully integrate AI, it’s crucial to address these operational hurdles head-on, rather than just speeding through production.

Ethical, legal and brand-safety risks

AI can draft fast, but fast content carries hidden liabilities that human editors often miss.

Legal exposure, muddy provenance, and brand-safety failures show up long after a post goes live.

Treat this as risk management, not just quality control.

That means records, clear ownership, explicit disclosures, and a chain of human approvals tied to policy.

Copyright, training-data provenance and content ownership

Copyright questions land first when AI uses scraped or licensed material without clear provenance.

Companies need to know what data fed the model and whether any output reproduces protected expression. Copyright: Determine whether generated material contains copyrighted elements and who holds infringement risk. Training-data provenance: Maintain records showing datasets, licensing status, and third-party models used. Content ownership: Clarify in vendor contracts whether output is assigned to your company or remains subject to vendor/licensor claims. These are not academic points.

If a vendor model was trained on copyrighted news or user-submitted images, downstream posts may carry exposure that requires legal review.

Disclosure, audience trust and reputational consequences

Audiences notice when voice and depth feel manufactured.

In a 2025 Content Marketing Institute survey, 63% of marketers reported trouble keeping AI content authentic and aligned with brand voice.

That erosion of trust can become a reputation problem when errors spread.

Jasper AI, for example, has faced criticism for producing marketing copy that reads generic, which fuels skepticism among savvy audiences.

HubSpot’s in-product AI features show how mainstream this has become—and how quickly expectations shift when automation is involved.

Human sign-off: Require named editorial approval before publication.

Visible disclosure: Use brief, clear labels when significant automation created the content.

Brand-safety filters: Maintain lists of banned topics, sensitive words, and off-limits claims.

Regulatory exposure and practical mitigation tactics

Legal risk is real: 58% of businesses surveyed in 2025 reported facing legal challenges tied to AI use in marketing, often around data privacy and compliance with laws like GDPR.

Conduct a data protection impact assessment (DPIA) for any system that personalizes content.

Include IP assignment and indemnity clauses in vendor agreements.

Keep immutable logs of prompts, model versions, and the training-data provenance you can prove.

Implement watermarking or metadata tags to mark AI-origin content where possible.

Train legal and editorial teams together so review is rapid and consistent.

Good governance reduces surprises and keeps legal exposure manageable.

It also preserves the trust that makes content valuable.

📥 Download: Download Template (PDF)

Measuring impact: attribution, metrics, and benchmarking

Can you trust pageviews to tell whether an AI draft actually helped your business? Most teams treat vanity metrics as proof, then learn the hard way that volume doesn’t equate to value.

A reliable measurement approach treats content as an experiment: define business outcomes first, then map metrics that meaningfully connect AI-driven production to those outcomes.

That means mixing short-term engagement signals with longer-term conversion and editorial-effort measures.

Standard analytics need interpretation layers when AI is involved.

Raw traffic spikes can hide poor retention or heavy editorial rework.

Conversion lifts that follow content pushes may be driven by distribution changes, not better writing.

What follows is a practical playbook for attribution, experiment design, and a recommended KPI set you can start tracking this week.

Why standard metrics can mislead with AI-generated drafts

AI-first drafts often inflate surface metrics while failing deeper signals.

A page can attract clicks but deliver short engaged time and many revisions.

Misread signal: High sessions with low engaged time can indicate clickbait headings rather than useful content.

Confounded attribution: Campaign-level boosts (email, paid social) can masquerade as organic improvement if UTM tagging or holdouts aren’t set.

Hidden cost: A low initial cost-per-article from AI can be offset by high editorial revision rates tracked only in CMS logs.

Remember that 63% of marketers reported problems maintaining authenticity and brand voice with AI-generated content in 2025, and 58% of businesses reported legal challenges related to AI and data privacy that affect personalization strategies that feed into metrics.

Designing experiments: A/B tests, holdouts, and quality gates

Treat content changes like product changes: run controlled tests with clear success criteria.

Define primary business metric (e.g., trial signups or content-assisted conversions).

Randomize traffic with an A/B split or use geographic/segment holdouts for distribution channels.

Run tests long enough for statistical power; prioritize effect size over tiny p-values.

Add a quality gate where editorial rework is measured; treat revision rate as a negative outcome.

This short walkthrough shows how to route traffic, tag variants, and log editorial rework so you avoid false positives.

Suggested KPI set and reporting cadence for continuous benchmarking

Below is a practical KPI matrix combining GA4, CMS logs, and editorial dashboards.

Suggested KPI set and reporting cadence for continuous benchmarking

KPI | What it measures | Recommended tool/method | Reporting frequency |

|---|---|---|---|

Organic sessions | Volume of organic visits to content |

| Weekly |

Click-through rate (SERP) | Search result attractiveness | Google Search Console + SERP tracking (Ahrefs/SEMrush) | Weekly |

Engaged time | Time users actively spend on page |

| Weekly |

Revision rate (editorial rework) | Amount of post-publish editing per piece | CMS revision logs + editorial dashboard | Biweekly |

Conversion per content piece | Direct conversions attributable to content |

| Biweekly |

Return visits | Audience retention and loyalty |

| Monthly |

Assisted conversions | Content’s role in multi-touch paths |

| Monthly |

Bounce rate by landing type | Misalignment between headline and content |

| Weekly |

Content ROI | Revenue (or goal value) minus production/edit costs | CRM (HubSpot) + cost tracker | Quarterly |

AI-detection / quality score | Editorial assessment of AI-origin and quality | Internal QA scorecard + | Biweekly |

Those KPIs combine analytics platforms (GA4, Search Console), third-party SEO tools, CMS revision logs, and CRM data as recommended.

Mixing behavioral metrics with editorial-effort and business outcomes prevents false positives.

Use the table to create dashboards that show both immediate engagement and hidden costs like revisions.

Measuring AI-driven content means pairing short-term signals with longer-term business outcomes and clear experiments.

That discipline separates lucky spikes from repeatable improvement.

Practical mitigation strategies and team practices

Imagine a weekly editorial review where every AI draft hits the CMS and the team notices the same shallow arguments and tonal drift.

That meeting should become the engine for disciplined checks, not a place to grumble.

Practical, repeatable practices reduce the risk that those drafts undermine SEO, brand voice, or legal safety.

Start by building explicit, machine-readable guardrails and human workflows that are easy to follow.

Small investments — a living prompt library, a short checklist for factual sourcing, a standard content brief template — buy a lot of downstream certainty and speed.

These practices address common AI content marketing challenges while keeping humans firmly in control.

63% — of marketers reported challenges with AI-generated content in a 2025 Content Marketing Institute survey, especially around authenticity and voice.

58% — of businesses in 2025 reported legal challenges tied to AI usage, mostly data privacy and compliance.

Editorial guardrails: checklists, style guides, and prompt libraries

Make a compact style guide that fits on one page and a one-paragraph content brief template.

The brief should note target audience, citation standard, angle, and three mandatory sources.

Source checklist: Require at least two primary sources and one industry authority per long-form piece.

Voice anchors: Provide three short example sentences that capture brand tone and a

do/notlist for language.Prompt library: Store vetted

prompttemplates with expected outputs and failure-mode notes.Citation policy: Define when to attach links, when to attach author attribution, and the minimum verification step.

Revision tags: Use metadata tags like

needs-factcheckandlegal-reviewto route drafts quickly.

Human-in-the-loop patterns that scale quality

Automate repeatable steps, but design gates where people add judgment.

That hybrid flow keeps throughput high without surrendering editorial control.

Prioritize: Use an editorial queue to mark pieces by business impact and risk (high/medium/low).

Triage and fix: Low-risk pieces go to a single editor; high-risk pieces go to a subject-matter reviewer plus legal.

Publish and monitor: Post-publish, assign a reviewer to monitor metrics and flagged feedback for 30 days.

Mention tools like HubSpot for workflow automation and Jasper AI for generation, but treat them as parts of the pipeline, not full owners.

Testing and feedback loops: small experiments to reduce risk

Run fast A/B-style experiments that control variables: prompt version, editorial depth, and evidence density.

Keep tests short and focused.

Micro-experiments: Run 2–4 week tests comparing a human-edited AI draft versus a human-first draft.

Quality scorecard: Rate pieces on factual accuracy, depth, and audience reaction; keep scores in a shared dashboard.

Postmortems: After a failed experiment, document causes and update the prompt library and checklist.

Start small, iterate, and lock successful patterns into templates and automation.

That way, the team gains speed without sacrificing the trust readers and regulators demand.

Examples and short case diagnostics

Real-world failures teach faster than theory.

Below are two compact cases that show how AI-driven content can either crater metrics or scale output without killing voice.

Each case includes rapid diagnostics and specific fixes you can use that same week.

These examples draw on patterns seen across tools like Jasper AI and platform features found in HubSpot, plus industry data.

Remember: a 2025 survey found 63% of marketers struggled with authenticity and brand voice in AI-generated content, and 58% of businesses reported legal or compliance issues tied to AI use in 2025.

Those figures explain why the incidents below are common.

Micro-case: AI content that tanked SEO — and how we recovered

A publication rolled out 400 AI-first posts to chase long-tail keywords.

Organic traffic fell for core topics within six weeks.

The immediate problem was content cannibalization and low topical authority.

Run a content-quality audit by traffic cohort.

Compare pages published in the AI push vs earlier winners.

Look for falling impressions, rapid SERP drops, and increased bounce rates.

Identify cannibalized topics and group them into content clusters.

Choose a single cornerstone piece and plan consolidation.

Merge low-value pages into the chosen cornerstone.

Use

301redirects for removed URLs and addrel="canonical"where consolidation isn’t possible.Inject human-led expertise: add interviews, original data, or unique frameworks to the cornerstone.

Re-submit updated sitemaps and monitor indexed changes in the next 2–6 weeks with weekly checks.

This sequence stops the bleeding fast and rebuilds topical authority without a full rewrite of every page.

Micro-case: improving throughput without sacrificing voice

Many teams pair automated drafting with a lightweight human process to keep voice intact.

Tools like HubSpot can generate personalized elements, while draft tools (commonly Jasper AI) produce first-pass copy that humans refine.

Define voice in 1 page: outline tone, banned phrases, and 3 example paragraphs.

Use AI for structure, not claims: generate outlines, meta descriptions, and A/B headings.

Human finishers: assign a single editor per content stream to inject nuance, sources, and storytelling.

This preserves throughput while keeping content distinct and defensible.

Quick checklist: readiness questions before expanding AI use

Editorial ownership defined: Is there a named editor who signs off on every AI draft?

Audit cadence in place: Can you audit a sample of new pages weekly for the first 90 days?

Legal review ready: Are privacy and IP risks checked for generated content pipelines?

Voice rulebook exists: Is there a one-page style guide every writer and prompt-writer follows?

Measurement plan set: Do you track cohort-level organic performance, not just raw pageviews?

Scaling AI without these checks raises the odds of the two failures above.

Treat these questions as a kill-switch: if three or more are unanswered, pause the expansion and fix the gaps.

Conclusion

Make AI work for your audience, not the other way around

You publish an AI draft and the metrics feel flat — low time on page, few shares, and a tone that misses the mark.

That moment captures the article’s central insight: scale without human judgment often produces noise, not resonance. Most AI content marketing challenges trace back to gaps in intent alignment, shallow topical depth, and broken workflows rather than the model itself.

Technical quirks like factual drift, generic phrasing, and brittle reasoning illustrate the limitations of AI in marketing and explain many common AI content pitfalls.

Operational failures — no editor checkpoint, poor A/B testing, or mis-tagged analytics — turn those model limits into measurable losses.

Start today by run a four-post quality audit: check intent match, original insight, citation accuracy, and the primary engagement hook for each piece.

Use those audit results to change one concrete process this week: add a mandatory human edit step, tighten briefing templates, or fix attribution tagging so performance signals are reliable.

If automation is needed to scale the work, tools like ScaleBlogger can automate repetitive plumbing while preserving human oversight.

Pick one underperforming post and improve it today — can you lift its engagement by the end of the week?