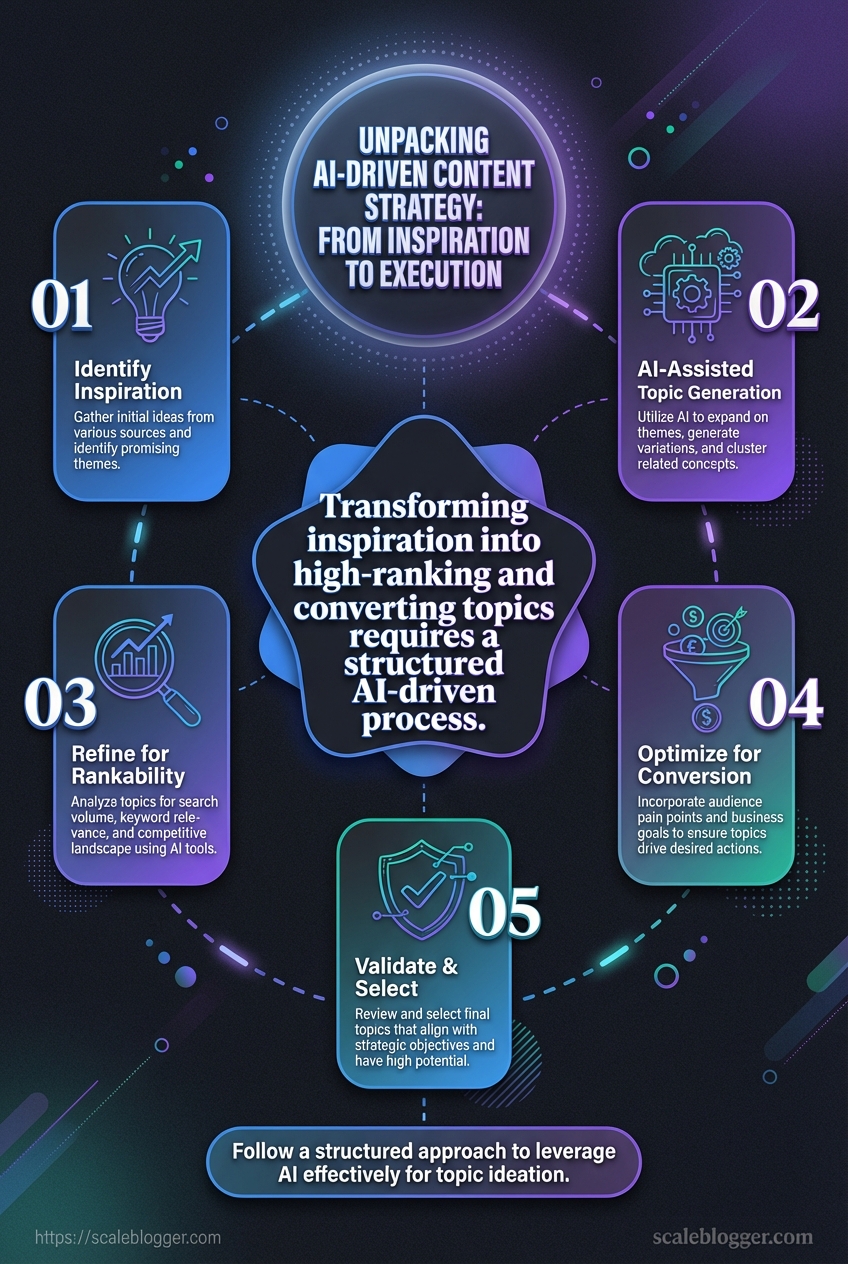

The hard part is not getting ideas.

It is turning a promising topic into something sharp, on-brand, and ready to publish on time.

That is where many teams stall.

A pile of prompts can produce drafts, but it rarely produces a real AI-driven content strategy.

Research from Content Strategy 2026 shows why: AI models are built to be helpful and neutral, which makes them weak at strong opinions and distinct voice.

So the real challenge is not finding more content marketing inspiration.

It is building a clean path from idea to draft to publish, with clear standards at every step.

Without that structure, AI execution in marketing tends to scale noise instead of clarity.

That gap is easy to spot.

One team gets faster but sounds generic.

Another team keeps the brand voice intact, but moves too slowly to keep up.

The difference is usually not the model.

It is the system around it, and the discipline to make each piece earn its place.

Quick Answer: AI-driven content strategy succeeds when you replace prompt-based drafting with a governed system that converts every inspiration into an on-brand brief and a publish-ready draft, then loops through optimization and measurement. Because AI is optimized for helpful, neutral output, it needs explicit “sharp positioning” standards and human strategy gates to avoid generic scaling. A practical workflow runs research → brief → outline/draft → SEO optimization → distribution/review in tighter cycles so output quality stays distinct while speed increases.

## Why AI Is Changing the Content Strategy Workflow

The old content workflow was built for scarcity.

A team brainstormed ideas, drafted one article at a time, edited it, published it, and hoped the piece earned attention before the next deadline swallowed the week.

AI changes that rhythm fast.

It pushes content strategy from a linear process into a live system, where research, drafting, optimization, and distribution happen in tighter loops.

That matters because AI models are great at producing helpful, neutral, consensus-driven output, but they struggle with sharp positioning and contrarian points of view, which is exactly where human strategy still matters most, as noted in Content Strategy 2026: AI, Resilience, and Your Framework.

That tension is the real shift.

Without a prescriptive strategy, AI can scale the wrong message just as efficiently as the right one, a point echoed in Why Content Strategy Will Define AI Success in 2026.

AI now fits across the modern content lifecycle, not just at the drafting stage.

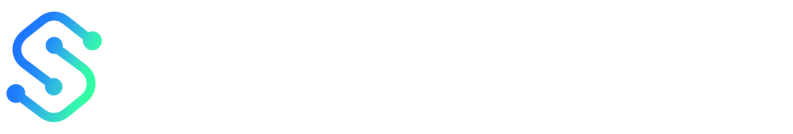

It can help with topic clustering, audience research, outline generation, first drafts, repurposing, and performance review, which matches the step-by-step model described in AI Strategy Execution Framework: From Vision to Execution.

The faster teams see content as a pipeline instead of a pile of separate tasks, the more value they get from AI-driven content strategy.

- Research and topic discovery: AI can surface patterns, gaps, and related themes faster than manual scanning.

- Drafting and variation: AI can produce first drafts, angles, and channel-specific versions without starting from zero.

- Optimization and scoring: AI can compare titles, structure, and semantic coverage before publication.

- Repurposing and distribution: A single article can become social posts, email snippets, and short-form updates.

- Human judgment: Strategy, voice, originality, and final approval still need a real editor’s eye.

That balance is where the workflow gets smarter, not noisier.

AI helps teams move faster, but human judgment keeps the content from sounding flat, generic, or off-brand.

The teams winning in 2026 are not using AI to replace strategy.

They are using it to make strategy visible, repeatable, and easier to execute every week.

## Building an AI-Driven Content Strategy Framework

A content calendar without rules turns into random acts of publishing.

A solid AI-driven content strategy starts with three decisions: the business result you want, the audience problem you solve, and the content outcome each asset must create.

That matters because AI is very good at producing plausible text, but it is not naturally opinionated.

As Arc Intermedia notes in its 2026 content strategy framework, AI models tend to be helpful, neutral, and consensus-driven, which makes them weak at strong point of view unless the framework gives them direction.

A prescriptive strategy fixes that gap.

The clearest framing comes from LinkedIn’s 2026 analysis of content strategy and AI: without encoded brand positioning and nuance, AI scales misalignment just as efficiently as it scales volume.

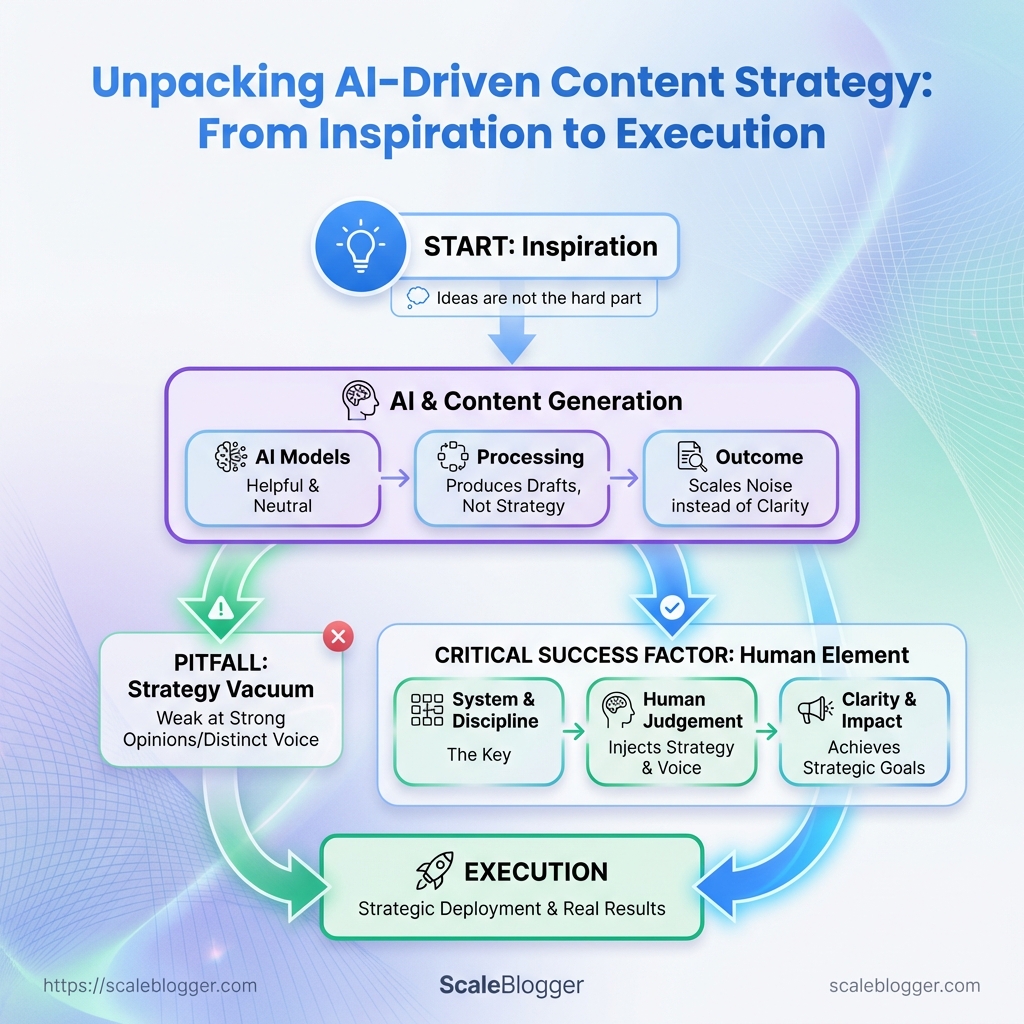

### AI-assisted content planning workflow

| Workflow Stage | Primary AI Input | Human Review Point | Output |
|---|---|---|---|
| Audience research | Search queries, CRM notes, support tickets, social comments | Confirm real pain points and audience segments | Priority audience needs |
| Topic clustering | Seed keywords, competitor themes, internal expertise map | Remove overlap and weak-fit themes | Content pillars and clusters |
| Brief creation | SERP patterns, intent cues, existing content gaps | Approve angle, voice, and success metric | Structured content brief |
| Draft generation | Brief, outline, brand voice rules, examples | Check accuracy, originality, and depth | First draft |
| Editing and QA | Draft, style guide, fact checks, terminology list | Tighten claims, flow, and consistency | Publish-ready asset |
| Scheduling | Channel rules, audience timing, campaign calendar | Validate timing and dependencies | Publication queue |
| Performance review | Analytics, ranking data, engagement signals | Decide refresh, expand, or retire | Updated content plan |

The workflow above also gives content marketing inspiration a practical filter, so good ideas do not crowd out the right ones.

The strongest teams usually pair business goals with search intent, then score ideas by impact, effort, and reuse potential.

That gives the calendar a spine, not just a pile of topics.
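The scoring step can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed formula: the 1 to 5 scales, the weights, the names `TopicIdea` and `priority_score`, and the scores assigned to the two sample topics are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class TopicIdea:
    title: str
    impact: int  # 1 (low) to 5 (high): expected business value
    effort: int  # 1 (easy) to 5 (hard): cost to produce
    reuse: int   # 1 (low) to 5 (high): repurposing potential


def priority_score(idea: TopicIdea) -> float:
    """Higher is better. The weights here are illustrative assumptions."""
    # Reward impact (double weight) and reuse, then divide by effort.
    return (2 * idea.impact + idea.reuse) / idea.effort


# Hypothetical ideas with invented scores, used only to show the ranking.
ideas = [
    TopicIdea("email marketing ideas", impact=3, effort=5, reuse=2),
    TopicIdea("welcome email examples for free-trial SaaS users",
              impact=4, effort=2, reuse=4),
]

ranked = sorted(ideas, key=priority_score, reverse=True)
for idea in ranked:
    print(f"{priority_score(idea):.2f}  {idea.title}")
```

Dividing by effort is one simple way to favor cheap, reusable topics. A team could just as reasonably use a weighted sum or a spreadsheet, as long as every idea is scored the same way.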

This approach works because humans decide direction, while AI handles repetition and scale.

The result is a framework that stays fast, useful, and much harder to derail.

A clever idea is cheap; a rankable topic earns its place.

The gap between “interesting” and “publishable” usually comes down to one thing: how the topic matches what searchers are trying to do right now (and whether you can realistically compete).

Run every spark through three filters—fast:

1) Search intent: match the job

If the query expects a guide, give a guide. If it expects a comparison, lead with criteria and tradeoffs. If it expects a template, deliver the framework first, then explain.

2) Audience fit: confirm the pain is real

Ask: are these the people who will benefit, and do they have the problem you’re solving today? If the topic targets the wrong audience segment, even “good” content won’t convert.

3) Competitiveness: earn the right to rank

Assess whether the SERP is dominated by stronger assets, broader coverage, or entrenched sites. If you can’t win on head terms, narrow the angle, tighten the scope, or choose a format your competitors under-serve.

From idea to usable topic

- Expand the seed phrase. Turn a broad idea into options with clear boundaries—e.g., “content marketing inspiration for B2B SaaS” or “content marketing inspiration from competitor gaps.”

- Match the intent. If searchers want decision support, don’t bury the comparison—make it the first real value.

- Group into clusters. Use one pillar to anchor multiple supporting pages. One strong pillar can carry pieces like topic scoring, editorial planning, and repurposing—so the cluster compounds instead of scattering.

A quick example: “email marketing ideas” is broad and competitive. “welcome email examples for free-trial SaaS users” is narrower, intent-aligned, and more likely to rank and convert.

When an idea survives intent, fit, and competitiveness, you’re not just writing—you’re building a cluster that can compound performance over time.

## Executing With AI: From Briefs to Publish-Ready Drafts

A weak brief gives you polished mush.

The draft looks fine at first glance, but it usually misses the angle, the proof, and the point.

That problem gets worse in an AI-driven content strategy, because the model will happily fill every gap with something safe and forgettable.

Both Content Strategy 2026: AI, Resilience, and Your Framework and Why Content Strategy Will Define AI Success in 2026 make the same point in different ways: if the brief does not encode nuance, AI scales the bland parts just as efficiently as the good ones.

The practical fix is simple, but not easy.

Strong briefs need a clear source pack, a defined audience pain, a hard angle, a few non-negotiable claims, and a list of things the draft must not do.

That is where AI writing tools earn their keep.

They are best used for outlining, drafting, and variation testing, then passed through a human quality check before anything goes live.

AI Strategy Execution Framework: From Vision to Execution captures the larger pattern well: execution works when each step has a job, not when one prompt tries to do everything.
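The brief ingredients listed earlier (source pack, audience pain, angle, non-negotiable claims, and a must-not list) can be captured as a small data structure that flags gaps before any draft is generated. The sketch below is one possible shape. The class name `ContentBrief`, its field names, and the sample values are hypothetical, not part of any tool mentioned here.

```python
from dataclasses import dataclass, field


@dataclass
class ContentBrief:
    audience_pain: str
    angle: str
    sources: list[str] = field(default_factory=list)          # the source pack
    required_claims: list[str] = field(default_factory=list)  # non-negotiable claims
    must_not: list[str] = field(default_factory=list)         # things the draft must not do

    def missing_parts(self) -> list[str]:
        """Return the names of brief sections that are still empty."""
        checks = {
            "audience_pain": self.audience_pain.strip(),
            "angle": self.angle.strip(),
            "sources": self.sources,
            "required_claims": self.required_claims,
            "must_not": self.must_not,
        }
        return [name for name, value in checks.items() if not value]


# Hypothetical brief that is only partly filled in.
brief = ContentBrief(
    audience_pain="Content team ships drafts fast but they sound generic",
    angle="The brief, not the model, decides draft quality",
    sources=["internal style guide"],
)
print(brief.missing_parts())  # ['required_claims', 'must_not']
```

A check like this keeps an incomplete brief out of the drafting step, which is cheaper than fixing a bland draft afterward.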

### AI writing tools compared for drafting and variation testing

| Tool | Best For | Key Strength | Workflow Fit | Notable Limitation |
|---|---|---|---|---|
| Scaleblogger | End-to-end content production and publishing | Automates the full content pipeline from analysis to drafting and publishing | Strong for teams that want a repeatable system, not just a writing assistant | Best fit for structured workflows rather than open-ended brainstorming |
| ChatGPT | Fast drafting and flexible rewrites | Highly adaptable prompts and broad general writing support | Useful for ideation, outlines, and alternate angles | Can drift without tight instructions and source boundaries |
| Claude | Long drafts and tone-sensitive editing | Handles long context well and stays steady on style | Good for expanding briefs into fuller drafts and polishing voice | Less built for publishing workflows and marketing operations |
| Jasper | Brand-led marketing teams | Strong brand voice features and campaign-oriented creation | Fits teams that need consistent marketing copy across assets | Works best when the team already wants Jasper’s ecosystem |
| Copy.ai | Marketing workflows and repurposing | Template-driven output for common business writing tasks | Handy for structured content tasks and fast iteration | Less flexible for highly custom editorial systems |

These tools are not interchangeable, and that matters.

The best choice depends on whether the bottleneck is ideation, drafting speed, consistency, or handoff into publishing.

A good draft still needs quality control.

Check the source use, the angle, the tone, the facts, and the calls to action, then test one or two variants before publishing.

That mix of speed and discipline is where content marketing inspiration becomes something people actually want to read.

We see the cleanest results when the brief does the heavy lifting first, and the model simply follows the map.

That keeps AI execution in marketing useful instead of noisy.

Teams get into trouble when they celebrate output before they check outcomes.

AI can fill a calendar fast, but if the topics, angles, and voice are off, the machine just scales the wrong message.

That risk is not hypothetical.

As Arc Intermedia’s 2026 content strategy framework points out, AI models tend to drift toward safe, consensus-heavy language, which can blur a brand’s edge.

Measure the right three layers

The cleanest way to measure AI execution in marketing is to split the scorecard into visibility, engagement, and conversion.

Visibility tells you whether people found the work.

Engagement shows whether the content earned attention.

Conversion reveals whether the content moved someone toward action.

Search data gives the first read.

Track impressions, average position, and click-through rate, then compare them with engaged sessions from organic and social traffic.

Engagement is where weak AI writing gets exposed.

A page can rank and still feel flat if readers bounce, skim, or ignore the next step.

- Visibility: impressions, ranking movement, click-through rate.

- Engagement: engaged sessions, scroll depth, time on page, shares.

- Conversion: demo requests, newsletter signups, assisted revenue, lead quality.
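The three layers can be grouped into a single scorecard. The following Python sketch assumes you already export raw counts from your analytics tool. The function names, the input fields, and the sample numbers are hypothetical, and the rate definitions (clicks over impressions, engaged sessions over sessions, conversions over sessions) are common conventions rather than something the framework prescribes.

```python
def safe_ratio(numerator: float, denominator: float) -> float:
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def scorecard(impressions: int, clicks: int, sessions: int,
              engaged_sessions: int, conversions: int) -> dict[str, dict[str, float]]:
    """Group raw counts into visibility, engagement, and conversion layers."""
    return {
        "visibility": {
            "impressions": impressions,
            "ctr": safe_ratio(clicks, impressions),
        },
        "engagement": {
            "engaged_sessions": engaged_sessions,
            "engagement_rate": safe_ratio(engaged_sessions, sessions),
        },
        "conversion": {
            "conversions": conversions,
            "conversion_rate": safe_ratio(conversions, sessions),
        },
    }


# Invented numbers for a single article.
card = scorecard(impressions=10_000, clicks=250, sessions=240,
                 engaged_sessions=120, conversions=6)
for layer, metrics in card.items():
    print(layer, metrics)
```

Keeping the layers separate makes it easier to see where a piece is weak: found but not clicked, clicked but not read, or read but not acted on.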

When content marketing inspiration turns into data, the next topic choice gets much easier.

A strong idea that never earns clicks is usually a packaging problem, not a production problem.

Benchmark before you change the machine

Baseline numbers matter more than most teams admit.

Before rolling out AI, capture a clean period of performance across the same content types, then compare the same metrics after adoption.

That comparison needs discipline.

A 2026 AI strategy execution framework makes the same point: execution improves when vision, process, and measurement stay linked.

Once the baseline is in place, feed the results back into prompts, topic selection, and publishing cadence.

Poor CTR usually means the hook needs work.

Weak conversion usually means the topic matches curiosity better than intent.

- Low impressions: widen topic coverage or refresh target queries.

- Low CTR: rewrite titles, intros, and value promises.

- Low engagement: tighten the angle and cut filler.

- Low conversion: adjust CTA placement and match intent more closely.
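The baseline comparison and the four fixes above can be combined into a small diagnostic. This is a sketch under stated assumptions: "low" is interpreted as a drop of more than 10% against the baseline, and the metric keys, the `diagnose` function, and the sample numbers are invented for illustration.

```python
# Suggested next steps per weak metric, taken from the list above.
ACTIONS = {
    "impressions": "widen topic coverage or refresh target queries",
    "ctr": "rewrite titles, intros, and value promises",
    "engagement": "tighten the angle and cut filler",
    "conversion": "adjust CTA placement and match intent more closely",
}


def diagnose(baseline: dict[str, float], current: dict[str, float],
             tolerance: float = 0.10) -> list[str]:
    """List actions for metrics that fell more than `tolerance` below baseline."""
    notes = []
    for metric, action in ACTIONS.items():
        base = baseline.get(metric)
        now = current.get(metric)
        if not base or now is None:
            continue  # no usable baseline, so no comparison is possible
        change = (now - base) / base
        if change < -tolerance:
            notes.append(f"{metric} down {abs(change):.0%}: {action}")
    return notes


# Hypothetical before/after numbers for the same content type.
baseline = {"impressions": 12000, "ctr": 0.030, "engagement": 0.55, "conversion": 0.020}
current = {"impressions": 15000, "ctr": 0.021, "engagement": 0.54, "conversion": 0.020}

for note in diagnose(baseline, current):
    print(note)
```

With these sample numbers only the click-through rate is flagged, because the small dip in engagement stays inside the tolerance. The threshold is a judgment call and should be tuned to how noisy your own data is.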

At Scaleblogger, we treat those signals as editing notes, not report-card clutter.

That loop is where AI execution starts paying rent.

## Common Risks, Limits, and Governance Considerations

AI gets risky when teams treat it like a substitute for accountability.

Most failures don’t look dramatic—they show up as bland positioning, factual mistakes, and gradual drift from what your brand actually stands for.

To prevent that, governance has to be concrete: a repeatable approval path, clear “who decides what,” and rules that define what AI may do versus what humans must validate.

> Without a prescriptive content strategy, AI can scale misalignment instead of meaning.

Keep it practical, not ceremonial: name the reviewers, then hold every draft to a short set of rules.

- Set a source rule: Any factual claim, statistic, or named reference needs a traceable source before publication.

- Protect brand voice: AI can draft in the house style, but people should check tone, positioning, and sharpness.

- Separate draft from approval: AI can assemble first passes and variants; a human should approve anything public-facing.

- Define red-flag topics: Legal, medical, financial, reputational, and crisis content should always move through human review first.

- Use AI for structure, not final authority: It’s better at clustering ideas and drafting options than judging nuance or risk.

- Keep a change log: Record what AI generated, what people changed, and who signed off. That makes audits far less painful.
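Some of these rules can be encoded so that routing does not rely on memory. The Python sketch below shows one way to route drafts and record sign-off. The stage names, the tag vocabulary, and the `ChangeLogEntry` fields and sample values are hypothetical and would need to match your own review process.

```python
from dataclasses import dataclass
from datetime import date

# Red-flag categories from the list above.
RED_FLAG_TOPICS = {"legal", "medical", "financial", "reputational", "crisis"}


@dataclass
class ChangeLogEntry:
    asset: str
    ai_generated: str   # what AI produced
    human_changes: str  # what people changed
    approved_by: str    # who signed off
    approved_on: date


def review_path(topic_tags: set[str], has_sources: bool) -> str:
    """Decide the next step for a draft. Stage names are hypothetical."""
    if topic_tags & RED_FLAG_TOPICS:
        return "human_review_first"
    if not has_sources:
        return "blocked_missing_sources"
    return "human_approval"


print(review_path({"pricing", "financial"}, has_sources=True))  # human_review_first
print(review_path({"seo"}, has_sources=False))                  # blocked_missing_sources
print(review_path({"seo"}, has_sources=True))                   # human_approval

# Example log record with placeholder values.
entry = ChangeLogEntry(
    asset="welcome-email-guide",
    ai_generated="outline and first draft",
    human_changes="rewrote intro, verified two statistics",
    approved_by="editor name",
    approved_on=date(2026, 1, 15),
)
```

Every path still ends with a person: the code only decides which review a draft gets, never whether it can skip one.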

A useful rule is simple: let AI handle repeatable work, and let people handle judgment-heavy work.

In practice, that means AI can help with outlines, topic variants, and draft expansion.

People should lead when the content affects reputation, demands original thinking, or needs a sharp opinion that sounds like an actual brand.

At Scaleblogger, that same boundary keeps an AI-driven content strategy from drifting into generic mush.

The model should move fast, but the human should still own the truth.

## The Real Advantage Is the Handoff

The strongest AI-driven content strategy is not the one that produces the most drafts.

It is the one that turns content marketing inspiration into a disciplined pipeline, where ideas become briefs, briefs become publishable articles, and publish time stops slipping.

That shift matters because AI execution in marketing only works when planning, drafting, and measurement are treated as one system.

The earlier sections point to the same truth: topic clustering, clear scoring, and human review do more for quality than raw prompt volume ever will.

Teams that win do not ask AI to think for them; they use it to move faster through the boring parts without losing judgment.

That is where scale becomes real instead of noisy.

Start with one article today and rebuild its path from idea to publish.

If the workflow breaks, fix the weak handoff first, not the whole strategy.

For teams that want to push that process further, Scaleblogger can help turn the full content pipeline into something far easier to manage.