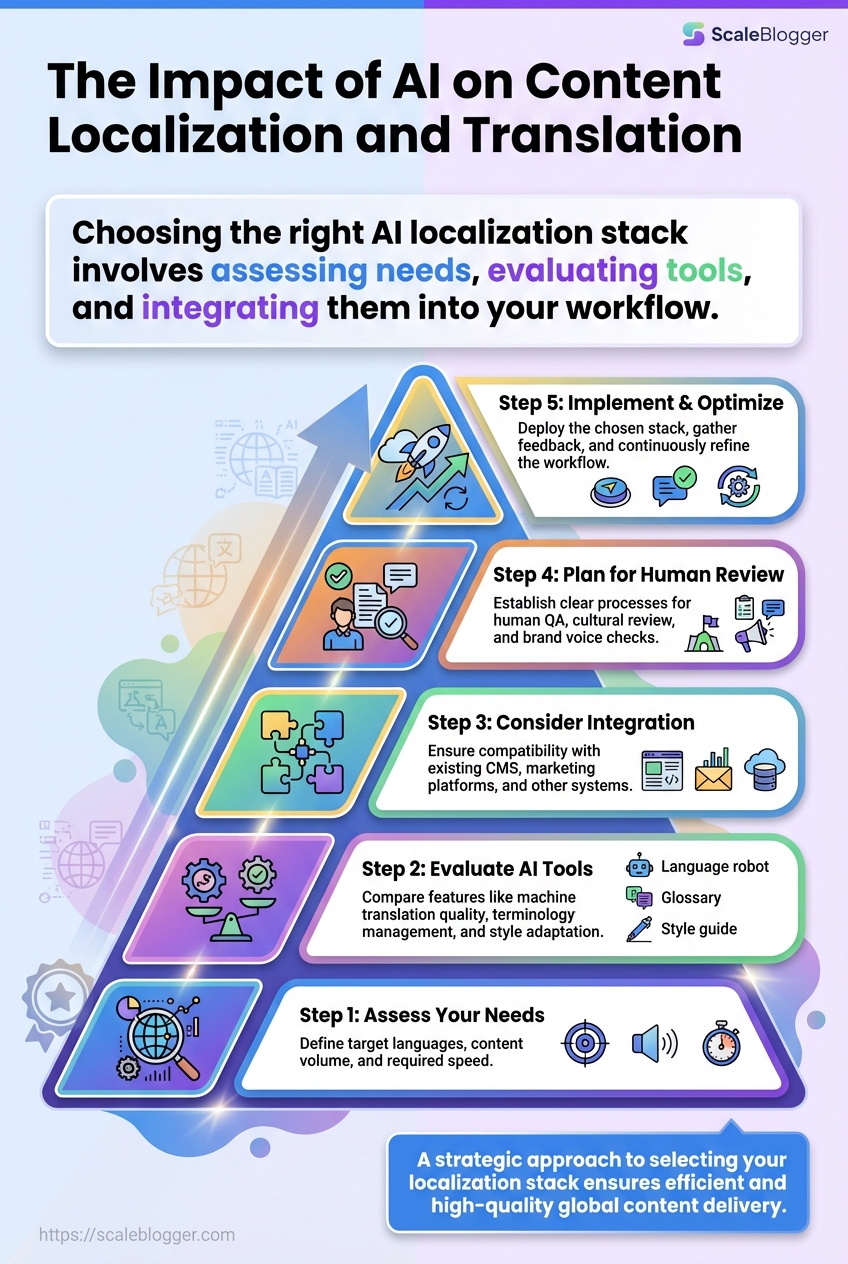

Why does a polished English campaign turn awkward the moment it lands in another language? That slip is usually not about grammar alone.

It is about tone, context, and the small cultural signals that make copy feel native.

AI localization tools changed the pace of the work.

A translation that once took days can now start as a draft in minutes, which matters when global content marketing depends on fast turns and frequent updates.

That speed is tempting, but it also raises the stakes.

When AI handles the translation, brand names, product terms, and emotional cues can drift unless teams set clear terminology rules and review steps.

Google’s supported-language list covers 133 languages as of 2025–2026. That kind of reach makes scale possible, but not automatically safe.

The real challenge is not getting words across.

It is keeping the message sharp, compliant, and believable across markets that read the same sentence very differently.

AI can do a lot of the heavy lifting, but the best localized content still needs human judgment where nuance matters most.


Why AI Is Changing the Economics of Global Content

Why does a single blog post now need twenty versions before lunch? That pressure is exactly why AI localization tools have moved from nice-to-have to core infrastructure.

Manual translation used to mean long queues, higher costs, and a lot of copy-paste busywork; now, teams can push drafts through systems like Amazon Translate, Google Cloud Translation API, Azure AI Translator, or DeepL API and get usable localized text far faster.

The economics change fast once translation becomes API-driven.

A content team can connect translation to a CMS, a marketing platform, or a localization management system, then send new copy through in batches instead of waiting on one language at a time.
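A rough sketch of what that batch hand-off can look like in code is shown here. The `translate` callable, the `fake_translate` stub, and the locale codes are assumptions made for illustration; a real setup would wrap one of the vendor APIs named above behind the same interface.

```python
from typing import Callable, Dict, List

# Hypothetical interface: (text, target_locale) -> translated text.
Translator = Callable[[str, str], str]


def translate_batch(
    segments: List[str], locales: List[str], translate: Translator
) -> Dict[str, List[str]]:
    """Return {locale: [translated segments]} for every requested locale."""
    return {
        locale: [translate(segment, locale) for segment in segments]
        for locale in locales
    }


def fake_translate(text: str, locale: str) -> str:
    # Stand-in for a real API call; it only tags the text with the locale.
    return f"[{locale}] {text}"


if __name__ == "__main__":
    drafts = translate_batch(
        ["New feature launch", "Read the guide"],
        ["de-DE", "fr-FR", "ja-JP"],
        fake_translate,
    )
    for locale, lines in drafts.items():
        print(locale, lines)
```

Keeping the translator behind a plain function makes it easier to swap engines per market later without touching the CMS side.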

As of the latest 2025–2026 documentation, Google Translate supports 133 languages, and that kind of coverage matters when global content marketing has to serve many markets from the same workflow.

The real shift is not just speed.

It is the ability to publish more often, test more markets, and keep terminology consistent with glossary controls, while still leaving room for human QA on brand voice and cultural fit.

Where the biggest gains show up

First-draft translation: AI turns blank pages into reviewable copy quickly, which cuts the time spent starting from scratch.

Batch updates: Product launches, help docs, and campaign refreshes can move through many languages at once instead of one by one.

Terminology control: Glossaries and style rules help protect brand names, product terms, and recurring phrases from drifting.

Workflow integration: TMS platforms such as Lokalise, Phrase, and Smartling can sit between translation engines and publishing systems.

Human review where it counts: Marketing copy still needs QA for tone, nuance, and domain accuracy before it goes live.

That balance matters because AI does not remove localization work; it changes where the effort goes.

Teams spend less time on raw translation and more time on review, testing, and market-specific judgment.
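To make the terminology-control point concrete, here is a minimal, engine-agnostic sketch: locked terms are swapped for placeholder tokens before translation and restored afterwards. The brand name "Acme Cloud", the token format, and the `fake_translate` stub are all hypothetical. Many engines offer their own glossary features, and a real engine may not leave placeholder tokens untouched, so treat this as one possible fallback rather than how any vendor does it.

```python
import re
from typing import Callable, Dict, List, Tuple


def mask_terms(text: str, locked_terms: List[str]) -> Tuple[str, Dict[str, str]]:
    """Swap each locked term for a placeholder token."""
    mapping: Dict[str, str] = {}
    # Longest terms first so "Acme Cloud Pro" is masked before "Acme Cloud".
    for i, term in enumerate(sorted(locked_terms, key=len, reverse=True)):
        token = f"__TERM{i}__"
        pattern = re.compile(re.escape(term))
        if pattern.search(text):
            text = pattern.sub(token, text)
            mapping[token] = term
    return text, mapping


def unmask_terms(text: str, mapping: Dict[str, str]) -> str:
    """Put the original locked terms back in place of their tokens."""
    for token, term in mapping.items():
        text = text.replace(token, term)
    return text


def translate_with_locked_terms(
    text: str, locked_terms: List[str], translate: Callable[[str], str]
) -> str:
    masked, mapping = mask_terms(text, locked_terms)
    return unmask_terms(translate(masked), mapping)


def fake_translate(text: str) -> str:
    # Toy English-to-German stub standing in for a real engine.
    return text.replace("Try", "Testen Sie").replace("today", "noch heute")


if __name__ == "__main__":
    print(translate_with_locked_terms(
        "Try Acme Cloud Pro today",
        ["Acme Cloud", "Acme Cloud Pro"],
        fake_translate,
    ))
```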

One practical example is a multilingual content calendar.

Instead of translating each post after it is approved, teams can route it through AI translation at the drafting stage, then publish region-specific versions much sooner.

That is why the cost curve bends.

The work does not disappear, but the expensive bottleneck shifts, and global content can move at a pace that manual translation never matched.

How AI Localization Tools Actually Work

Ever wonder why some brands can launch in five markets without turning the process into a project graveyard? AI localization tools sit in the middle of that mess and turn it into a repeatable pipeline.

They usually start with machine translation, but that is only the first pass.

Good systems also carry terminology rules, style guidance, and review steps so the output sounds like the brand, not just a translated document.

The real shift comes from the way large language models handle context.

Traditional translation systems map text across languages very efficiently, while LLM-based tools can also adjust tone, rewrite for a channel, and repurpose a source article into new formats without losing the main idea.

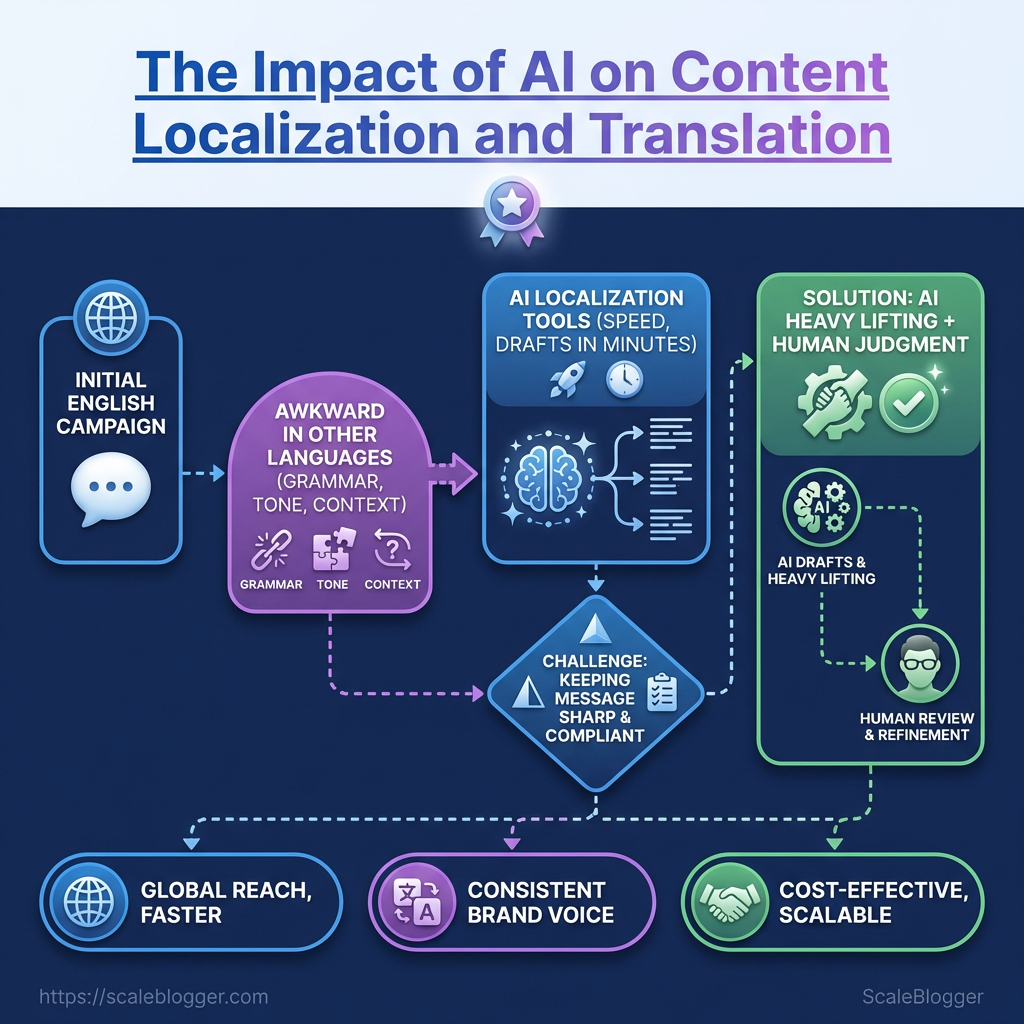

Core capabilities in modern AI localization tools

| Capability | What It Does | Workflow Benefit | Best Use Case |
|---|---|---|---|
| Machine translation | Produces fast draft translations for web pages, campaigns, and product copy | Cuts first-pass turnaround from days to minutes | High-volume content translation with AI |
| Terminology management | Locks key brand terms, product names, and approved phrases | Keeps wording consistent across languages | SaaS products, regulated industries, and brand-heavy content |
| Tone and style adaptation | Adjusts formality, warmth, and reading level for each market | Makes copy feel local instead of mechanically translated | Marketing pages and social posts |
| Content repurposing | Rewrites one source asset into variants for blogs, email, social, or landing pages | Extends one piece of content into multiple channels | Global content marketing campaigns |
| Human review support | Routes content through QA, editing, and sign-off steps | Catches nuance, cultural issues, and awkward phrasing | Customer-facing content that needs polish |

The pattern is simple once you see it.

AI drafts fast, terminology keeps the guardrails in place, and human review catches the places where nuance still matters.

That blend also explains why platforms like ScaleBlogger fit naturally into the workflow.

A system like that can create the source article, while localization tools and review layers adapt it for each market, keeping the pipeline moving without turning every language into a fresh start.

Traditional translation systems are great at consistency.

LLMs are better at flexibility.

The strongest teams use both, then add review where brand voice and cultural fit really matter.

What AI Gets Right, and Where Human Review Remains Essential

Why do some AI translations feel ready in minutes, while others fall apart on one odd phrase? The honest answer is that AI localization tools are excellent at producing fast, consistent first drafts.

Services such as Amazon Translate, Google Cloud Translation API, and Azure AI Translator handle large batches well, and Google’s language coverage now spans 133 languages in its latest 2025–2026 documentation.

That kind of reach matters when global content marketing teams need version one now, not next week.

Where AI shines is obvious once you watch a content team under pressure.

It can mirror structure, keep terminology stable with glossary rules, and push the same source copy through many markets without fatigue.

That is a real advantage for content translation with AI, especially when the work is repetitive, time-sensitive, or tied to frequent product updates.

What AI does well

AI is strongest when the job is mechanical.

It can preserve sentence order, translate plain instructions, and keep recurring product names aligned across dozens of pages.

It also helps teams move from blank page to review stage much faster. DeepL API can enforce terminology choices, while localization platforms like Lokalise, Phrase, and Smartling help route drafts into review workflows instead of leaving everything in someone’s inbox.

Speed at scale: Fast drafts across many locales, especially for refreshes and updates.

First-draft consistency: Stable phrasing for repeated terms and product names.

Workflow fit: Easy to plug into API-based publishing and review systems.

Where humans still matter

AI still stumbles on nuance.

Idioms, humor, cultural references, and brand voice can all go sideways in ways that look small on screen and huge to a local reader.

Regulated claims are even trickier.

A phrase that sounds harmless in English can create legal trouble in another market if it overstates a benefit or weakens a required disclaimer.

One mistranslated promise can cost far more than a full review pass ever would.

A practical review model

For high-stakes multilingual content, the safest pattern is simple.

Start with a machine draft, then add human gates for meaning, voice, and market fit.

Terminology check: Confirm names, product terms, and regulated phrases.

Voice and tone review: Make sure the copy sounds like the brand, not a machine.

Local QA pass: Test idioms, dates, currency, legal lines, and any claims.

That review model keeps the speed advantage without handing over control.

It is the difference between content that merely exists in another language and content that actually works there.
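One way to keep that review model honest is to encode the gates so nothing publishes until each one is signed off. The sketch below is an assumption about how a team might model it, not a feature of any platform named here; the gate names mirror the three checks above, and enforcing them in that order is a design choice.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Gate names mirror the three checks above; the strict order is an assumption.
GATES: List[str] = ["terminology", "voice_and_tone", "local_qa"]


@dataclass
class LocalizedAsset:
    locale: str
    text: str
    signoffs: Dict[str, str] = field(default_factory=dict)  # gate -> reviewer

    def sign_off(self, gate: str, reviewer: str) -> None:
        if gate not in GATES:
            raise ValueError(f"unknown gate: {gate}")
        # Every earlier gate must already be signed off.
        for earlier in GATES[: GATES.index(gate)]:
            if earlier not in self.signoffs:
                raise RuntimeError(f"{earlier} must pass before {gate}")
        self.signoffs[gate] = reviewer

    def pending_gates(self) -> List[str]:
        return [gate for gate in GATES if gate not in self.signoffs]

    @property
    def publishable(self) -> bool:
        return not self.pending_gates()


if __name__ == "__main__":
    asset = LocalizedAsset("de-DE", "Maschineller Entwurf")
    asset.sign_off("terminology", "reviewer_a")
    print(asset.pending_gates(), asset.publishable)
```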

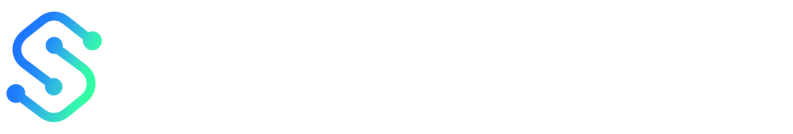

How to Choose the Right Localization Stack for Content Marketing

Why does one team move from English to six markets without drama, while another gets buried in revision loops? The difference is usually the stack, not the translators.

A good setup is less about “best tool” and more about fit.

For global content marketing, the real test is whether the system can handle source creation, translation, review, publishing, and brand consistency without turning every article into a handoff maze.

The fastest way to judge AI localization tools is to look at five things at once: language coverage, terminology control, human review support, integration depth, and how much governance the team can actually enforce.

As of 2026, Google’s supported-languages list still shows 133 languages, which sounds impressive until you remember that language count alone does not protect tone, terminology, or approval flow.

Pricing needs the same kind of skepticism.

A cheap per-word rate can get expensive once you add seats, API calls, review rounds, glossary rules, and CMS connections.
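A quick back-of-the-envelope model shows why. The function below is a simplified sketch that covers only three of those cost lines (translation volume, seats, and human review), and every figure in the example is invented for illustration, not taken from any vendor's price list.

```python
from typing import Dict


def monthly_localization_cost(
    words: int,
    locales: int,
    rate_per_1k_words: float,
    seats: int,
    seat_price: float,
    review_hours_per_locale: float,
    reviewer_hourly_rate: float,
) -> Dict[str, float]:
    """Break one month's localization spend into its main parts."""
    translation = words * locales / 1000 * rate_per_1k_words
    seat_cost = seats * seat_price
    review = locales * review_hours_per_locale * reviewer_hourly_rate
    total = translation + seat_cost + review
    return {
        "translation": round(translation, 2),
        "seats": round(seat_cost, 2),
        "review": round(review, 2),
        "total": round(total, 2),
    }


if __name__ == "__main__":
    # All inputs are made-up numbers for illustration only.
    costs = monthly_localization_cost(
        words=50_000, locales=6, rate_per_1k_words=1.0,
        seats=5, seat_price=120.0,
        review_hours_per_locale=10, reviewer_hourly_rate=45.0,
    )
    for item, amount in costs.items():
        print(f"{item:>12}: {amount:,.2f}")
```

With these made-up inputs, machine translation accounts for 300 of a 3,600 total, so the per-word rate is the smallest line on the bill.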

Comparison criteria for AI localization tools

| Tool | Primary Strength | Content Marketing Fit | | Best For |
|---|---|---|---|---|
| ScaleBlogger | Top-tier AI writing support and end-to-end blog automation | Yes | Strong | Content teams producing multilingual blog content |
| DeepL | High-quality translation output | Limited | Moderate | Translation-first workflows |
| Smartling | Enterprise localization workflow | Yes | Strong | Large teams managing multiple languages |
| Phrase | Localization operations | Yes | Strong | Product and web content teams |
| Lokalise | Translation workflow management with AI and integration support | Yes | Strong | Teams coordinating translation jobs and review |
| Amazon Translate | Real-time and batch machine translation via API | Limited | Moderate | Pipelines that need fast translation at scale |
| Google Cloud Translation API | Broad language coverage and API-based translation | Limited | Moderate to Strong | Teams serving many language markets |
| Azure AI Translator | Cloud translation for localization workflows | Limited | Moderate | Enterprises already built around Azure services |

Scale matters, but control matters more.

A tool with broad coverage and weak governance can create more cleanup than it saves.

Writing-first and translation-first stacks solve different problems.

If the job starts with a blank page, a writing-first tool makes more sense.

If the job starts with approved source copy, a translation-first platform is usually safer.

The adoption questions are simple, and they tell you a lot fast.

Can the tool enforce glossaries and terminology rules? Can it plug into your CMS, scheduling, and analytics stack? Can it separate draft content from approved content, and does it keep a usable audit trail?

If the answer to those questions is muddy, the stack is probably wrong for marketing work.

For teams that want blog production, publishing, and repurposing to happen in one flow, a platform like ScaleBlogger fits the writing-first side of the decision better than a pure translation engine.

How the choice usually plays out

A translation-first platform wins when accuracy, control, and consistency are the main worries.

That is common for product pages, help docs, legal text, and regulated content.

A writing-first stack wins when speed, volume, and repurposing matter more.

That is the better lane for blog-led growth, especially when one article needs to become several market-ready versions without a lot of manual stitching.

The cleanest test is this: if your team spends more time creating source content, choose writing-first.

If your team spends more time preserving approved meaning, choose translation-first.

Building a Global Content Marketing Workflow with AI

Why do some global teams ship the same campaign in six languages without chaos, while others get stuck in endless review loops? The difference is usually the workflow, not the translation model.

A good system starts with one source brief, then moves through drafting, translation, review, publication, and measurement in a repeatable order.

AI localization tools make that sequence faster, but the real win comes from treating every market as part of one connected pipeline.

That matters even more when content has to live in several places at once.

A blog post, a landing page, and a social cut-down should all carry the same message, the same terminology, and the same offer.

The workflow that keeps teams moving

Start with a briefing template that defines audience, goal, tone, glossary terms, and channel format.

That one page saves more rework than any fancy model choice.

Write the source brief first. Define the target market, content goal, and words that must never change.

Draft in the source language. Keep the original clear and structured so the AI translation has less room to drift.

Send the draft through translation APIs. Services such as Amazon Translate, Google Cloud Translation API, Azure AI Translator, or DeepL API can generate fast first-pass versions.

Run human review on the right parts. Review headlines, offers, idioms, legal lines, and any sentence that carries brand voice.

Publish and tag the output. Save market, language, campaign, and channel data so later reporting stays clean.

Consistency gets easier when one term means one thing everywhere.

Keeping language consistent across channels

A product name should not turn into three different versions across a blog, an email, and a paid social post.

That kind of drift confuses readers fast.

Glossaries, terminology locks, and channel rules keep the message steady.

In practice, that means the same CTA wording, the same brand phrases, and the same formatting rules in every locale.

Glossary control: Lock names, feature terms, and slogans before translation starts.

Channel wrappers: Adapt length and tone for email, web, and social without changing the core message.

Style checks: Compare translated copy against the source brief, not just the source text.

Approval gates: Route risky assets through human sign-off before they go live.
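A simple automated check can back up those rules before anything reaches human sign-off. The sketch below flags channels where a locked term from the source copy did not survive translation verbatim. The function name, the "Acme Cloud" brand, and the sample copy are hypothetical, and plain substring matching is a deliberate simplification.

```python
from typing import Dict, List


def missing_locked_terms(
    source: str, localized: Dict[str, str], locked_terms: List[str]
) -> Dict[str, List[str]]:
    """Map each channel to the locked terms present in the source but absent from its copy."""
    expected = [term for term in locked_terms if term in source]
    report: Dict[str, List[str]] = {}
    for channel, text in localized.items():
        missing = [term for term in expected if term not in text]
        if missing:
            report[channel] = missing
    return report


if __name__ == "__main__":
    source = "Start your Acme Cloud trial"
    german = {
        "blog": "Starten Sie Ihre Acme Cloud Testversion",
        "email": "Starten Sie Ihre Acme Wolke Testversion",  # brand was translated
    }
    print(missing_locked_terms(source, german, ["Acme Cloud"]))
```

Channels that pass the check are left out of the report, so an empty result means every locked term carried over.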

The diagram shows the path from source draft to AI translation, then human review, publication, and performance tracking.

It is the fastest way to spot where quality drops or where one market needs a different treatment.

Measuring translated performance

Published content is only useful if it earns its keep. Track each language version against its own market baseline, then compare results across markets with similar intent.

Traffic quality: Look at engaged sessions, not just visits.

Conversion rate: Compare local landing pages against the source page.

Time to publish: Measure how long each market takes from brief to live.

Revision rate: Count how often a translation comes back for fixes.

That kind of benchmarking turns global content marketing into a system, not a guessing game.
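As a sketch of how those four measures might sit side by side per market, the record below computes conversion rate, revision rate, and time to publish from raw counts. The field names and sample numbers are assumptions, as is the choice to measure conversions against engaged sessions rather than all visits.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class LocaleStats:
    locale: str
    engaged_sessions: int
    conversions: int
    translations_delivered: int
    translations_returned: int  # sent back for fixes at least once
    brief_date: date
    live_date: date

    @property
    def conversion_rate(self) -> float:
        # Assumption: conversions are measured against engaged sessions.
        if self.engaged_sessions == 0:
            return 0.0
        return self.conversions / self.engaged_sessions

    @property
    def revision_rate(self) -> float:
        if self.translations_delivered == 0:
            return 0.0
        return self.translations_returned / self.translations_delivered

    @property
    def days_to_publish(self) -> int:
        return (self.live_date - self.brief_date).days


if __name__ == "__main__":
    # Sample numbers are invented for illustration.
    de = LocaleStats("de-DE", 400, 20, 10, 3, date(2025, 3, 3), date(2025, 3, 10))
    print(
        f"{de.locale}: conversion {de.conversion_rate:.1%}, "
        f"revisions {de.revision_rate:.0%}, {de.days_to_publish} days to publish"
    )
```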

Tools like ScaleBlogger fit well when the whole path from brief to publish needs to stay organized in one place.

Conclusion

Making Global Content Feel Native

The biggest shift is not that AI translates faster.

It is that it makes global content marketing realistic at scale, without turning every market into a copy-paste afterthought.

The strongest AI localization tools handle the heavy lifting, but the real value appears when people still guard tone, intent, and cultural fit.

That was the trap in the English-campaign example from earlier: the words were right, yet the message still felt off.

Content translation with AI can produce a clean draft in seconds, but a technically correct line can still sound imported, stiff, or oddly formal.

The teams that win build a repeatable workflow, then check what actually lands in each market instead of trusting one-size-fits-all assumptions.

Pick one strong article today and localize it for a second audience.

Review the headline, examples, CTA, and references before it goes live, then compare performance by region.

If a system for drafting, scheduling, and publishing would help, tools like ScaleBlogger are one practical option.