A blog brief lands on your desk, and AI content creation can spit out a passable draft before the coffee gets cold.

Then the real work starts: deciding whether the piece sounds informed, specific, and recognizably human, or just fast.

That tension sits at the center of the human vs AI content debate.

HubSpot’s 2026 State of Marketing Report says 80% of marketers use AI for content creation, and 75% use it for media production.

The same report says 61% believe marketing is facing its biggest disruption in 20 years because of AI.

Speed is no longer the question.

Quality is.

That is why the content quality debate keeps coming back to the same point: ranking and scale do not automatically equal trust.

A Semrush study reported by Search Engine Land found human-written pages took Google’s No. 1 spot 80% of the time, while purely AI-generated pages did so 9% of the time.

The pattern is familiar.

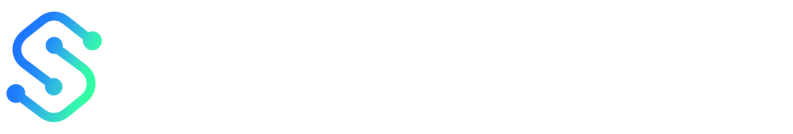

AI is excellent at volume, structure, and repetition.

Humans are still better at judgment, nuance, and knowing when a sentence sounds technically correct but emotionally dead.

Quick Answer: Use a hybrid workflow. Let AI handle high-volume, repeatable steps like drafting, triage/tagging, and summaries, while humans own editorial judgment, verification, brand voice, and compliance. HubSpot’s 2026 State of Marketing Report shows 80% of marketers already use AI for content creation, but only 6% fully embed it into their workflows, so the real lever is control and quality, not speed. A Semrush study reported by Search Engine Land found human-written pages reach Google’s #1 spot 80% of the time, versus 9% for purely AI-generated pages.

What if the real win isn’t choosing between AI content creation and human judgment—but assigning each part of the job to the right kind of mind?

AI is built for speed: pattern detection, repetition, and turning prompts into workable drafts.

Humans are built for meaning: taste, context, and the ability to notice when a sentence is correct on paper but dead in tone.

That’s why the human vs AI content debate keeps returning to the same operational question: where does risk sit, and who is accountable for it?

HubSpot’s reporting shows AI use for content is already widespread, but fully embedding it into workflows is still much rarer—so the lever is control, not raw throughput.

In practice, teams should stop asking “Can AI write this?” and start asking “Should AI touch this part of the process at all?”

This question comes first because everything after it depends on the answer: if AI handles triage, tagging, and first drafts, humans can keep final editorial control, especially on messaging that affects trust or compliance.

At Scaleblogger, we treat this as a practical workflow split, not a philosophical stance: machines move faster, but editors decide what earns a public voice.

The useful question is not which side wins.

It’s which parts of the work need machine speed—and which parts need a human hand.

What AI content creation does well, and where it falls short

AI earns its keep when the task is structured, repeatable, and easy to verify from inputs—because machines are fast at turning prompts and patterns into usable drafts. The flip side: AI struggles when the job requires lived context, original judgment, or brand-specific nuance that can’t be derived from rules.

Where AI helps

- Speed and scale: AI can turn a topic list into outlines, drafts, and variants in minutes—useful when you need volume quickly.

- Pattern matching: It’s good at detecting common headings, recurring sub-questions, and the default structure that performs in a niche.

- Ideation support: It can generate angles fast, especially when a writer is stuck at the blank-page stage.

- Drafting quality (not final judgment): It often produces a coherent first pass, which makes human editing more efficient.

Where AI falls short

- Originality and nuance: AI tends to remix familiar language and therefore can feel generic when you need a sharp point of view.

- Context and brand voice: A model may imitate tone, but it can miss the small ‘this is how we talk’ cues that make a brand sound consistent rather than templated.

- Factual risk: AI can sound confident while being wrong. That’s why humans must handle source vetting, legal/privacy considerations, and any claims that can’t be treated as drafts.

For tech-savvy creators, the workflow shift is practical: AI can handle triage, rough drafting, summaries, and tagging. Humans then frame the argument, decide what to include or omit, and protect voice and trust before anything ships.

In other words, the hybrid model isn’t a philosophical stance—it’s an efficiency strategy with guardrails. AI drafts the scaffolding; editors supply the judgment that makes the content worth believing.

What human writers do better, and where they need support

A draft can be technically correct and still feel strangely hollow.

Human writers are best at deciding what matters, what to leave out, and how to make a piece feel like it came from a real point of view.

That judgment is not a side benefit.

It is the core advantage.

When the job calls for narrative depth, audience empathy, and subject expertise, a person can read between the lines in a way software still struggles to match.

The ranking data backs that up in a practical, not mystical, way.

In Search Engine Land’s coverage of a 42,000-post Semrush study, human-written pages held Google’s No. 1 spot 80% of the time, while purely AI-generated pages did so 9% of the time.

Search performance is never the whole story, but it does hint at where editorial judgment still pays off.

Where human writers still win

- Judgment under pressure: A person can decide when a claim needs a caveat, when a section needs more nuance, and when a topic should be handled gently.

- Narrative flow: Humans connect facts into a story that feels coherent, not just complete. That matters when readers need context, not a content dump.

- Audience empathy: Writers notice when a phrase sounds flat, pushy, or off-brand. That instinct is hard to fake and easy to lose.

- Subject nuance: Technical, legal, and sensitive topics often need real-world understanding, not just grammatical fluency.

Where humans need support

- Speed and volume: Rewriting the same type of brief fifty times is draining. AI content creation helps with first-pass drafts and repeatable structure.

- Consistency across teams: Tone drifts when multiple writers handle the same topic without a shared system. A style guide only goes so far.

- Repetitive production load: Tagging, formatting, summarizing, and updating are useful work, but they eat time that should go into sharper thinking.

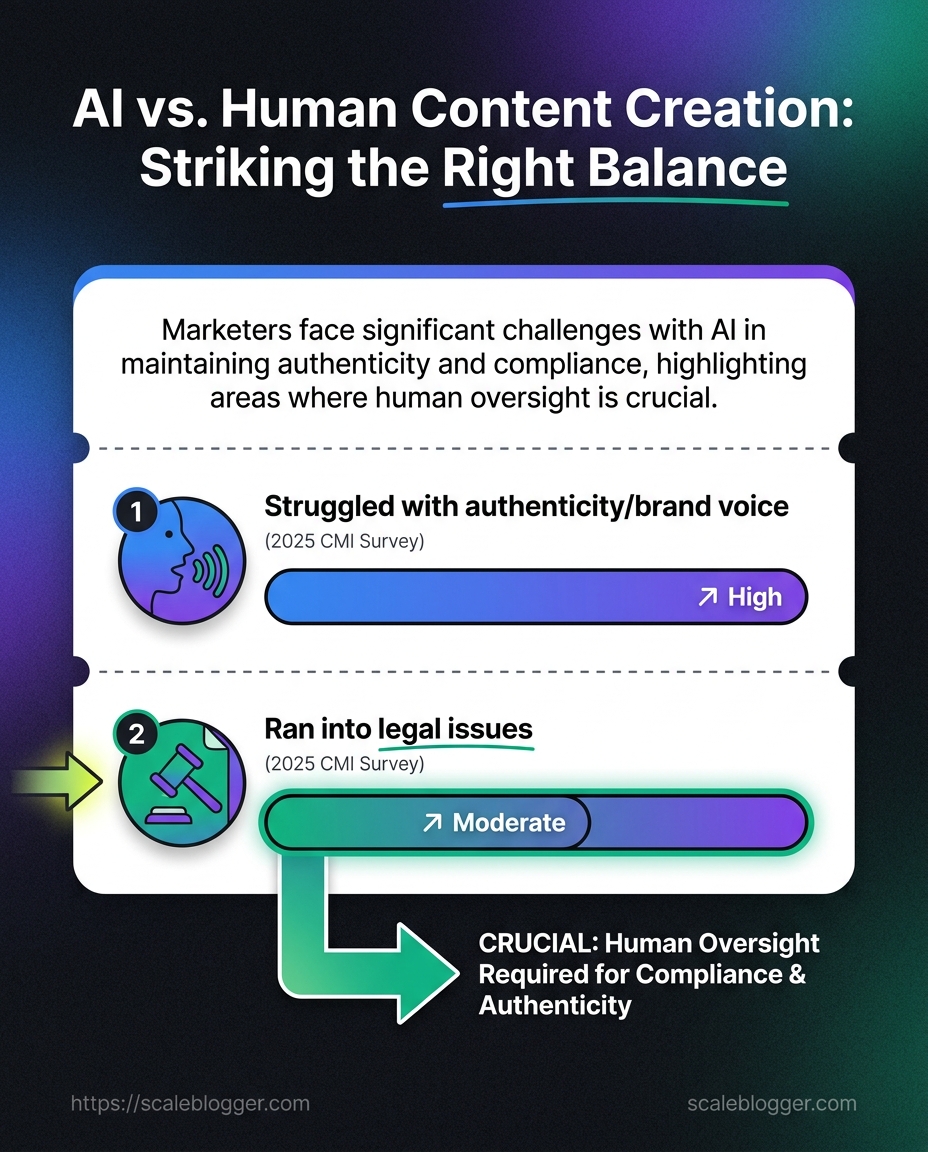

- Trust and compliance: A 2025 survey cited in Scaleblogger’s review of AI content marketing challenges found that 63% of marketers struggled with authenticity and brand voice, while 58% ran into legal or compliance issues.

That is why the strongest teams treat human vs AI content as a division of labor, not a contest.

Human writers set the standard, and automation carries the repetitive load. HubSpot’s 2026 State of Marketing Report shows AI is already a baseline for many marketers, which makes that split even more important.

At Scaleblogger, we think that balance is the whole game: humans own the final call, and AI handles the grind that gets in the way.

The clearest way to resolve the human vs AI question is to stop treating it like a culture war—and start treating it like task design.

Different content jobs reward different strengths. AI does best when the work is structured, repeatable, and easy to validate from inputs. Humans do best where the cost of being wrong is high.

At Scaleblogger, we frame that split as a workflow decision, not a debate about identity.

Decision matrix for choosing the right approach

| Content task | Best fit | Why it fits | Risk level | Recommended review depth |
|---|---|---|---|---|
| Blog outline | AI | It can sort ideas, cluster headings, and surface missing angles fast. | Low | Light editorial review |
| First draft | AI | It handles structure and gets words on the page quickly. | Medium | Full line edit |
| Expert commentary | Human | Original judgment, lived context, and opinion matter here. | High | Deep subject-matter review |
| Product comparison | Hybrid | AI can organize features, while humans verify claims and nuance. | Medium-high | Fact check plus editorial pass |
| SEO metadata | AI | Titles, descriptions, and schema-style text are formulaic and easy to test. | Low-medium | Spot check and approval |
| Repurposed social copy | AI | It can reshape one article into platform-specific posts at scale. | Medium | Brand voice and compliance review |
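If your briefs already pass through a script or a CMS hook, the matrix can be encoded as a simple lookup so every task arrives with its risk level and review depth attached. This is a minimal sketch, not a feature of any particular tool: the task keys, the `Routing` type, the `route_task` helper, and the rule that unlisted tasks default to human ownership are all hypothetical choices for illustration.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Routing:
    best_fit: str                 # "AI", "Human", or "Hybrid"
    risk: str                     # risk level from the decision matrix
    review: str                   # recommended review depth
    human_final_say: bool = False


# Hypothetical encoding of the decision matrix; task keys are illustrative.
DECISION_MATRIX = {
    "blog_outline": Routing("AI", "Low", "Light editorial review"),
    "first_draft": Routing("AI", "Medium", "Full line edit"),
    "expert_commentary": Routing("Human", "High", "Deep subject-matter review", True),
    "product_comparison": Routing("Hybrid", "Medium-high", "Fact check plus editorial pass"),
    "seo_metadata": Routing("AI", "Low-medium", "Spot check and approval"),
    "repurposed_social_copy": Routing("AI", "Medium", "Brand voice and compliance review"),
}

# Assumption: tasks missing from the matrix are treated as high risk and human-owned.
DEFAULT_ROUTING = Routing("Human", "High", "Deep subject-matter review", True)


def route_task(task: str, sensitive: bool = False) -> Routing:
    """Look up how a content task should be handled.

    Sensitive work (legal claims, privacy-sensitive statements,
    brand-critical promises) always gets a human final say, whatever
    the matrix recommends for drafting.
    """
    routing = DECISION_MATRIX.get(task, DEFAULT_ROUTING)
    if sensitive:
        # Frozen dataclass: replace() returns a new copy with the flag set.
        routing = replace(routing, human_final_say=True)
    return routing


if __name__ == "__main__":
    for task, sensitive in [("seo_metadata", False), ("first_draft", True), ("press_release", False)]:
        print(task, route_task(task, sensitive))
```

The design choice worth copying is the default: anything the matrix doesn't recognize falls back to the most cautious route rather than the fastest one.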

AI wins on scaffolding, summaries, metadata, and repurposing because those jobs reward consistency more than style.

Humans win when the cost of being wrong is high.

Sensitive topics are another line in the sand—anything involving legal claims, privacy-sensitive statements, or brand-critical promises needs a human final say.

The practical move is simple:

Use AI for speed where structure is enough, and keep humans on anything that shapes trust.

That’s where the content quality debate stops being theoretical and starts saving real time.

What if the smartest move isn’t choosing sides—but designing a hybrid flow where AI does the heavy lift and people protect judgment?

That matters because the content quality debate is not theoretical anymore. The best results happen when the brief, the draft, and the review are treated as separate responsibilities.

A clean hybrid workflow starts before the first prompt.

The brief should define the audience, the intent, the claims that must be sourced, and anything that cannot drift from brand voice.

After that, AI can handle the heavy lift without taking over the final call.

A workflow that keeps quality intact

- Brief first: Lock the angle, audience, and source list before prompting.

- Draft fast: Use AI for structure, outline expansion, and first-pass copy.

- Fact-check hard: Verify names, dates, claims, and any legal or technical detail.

- Refine for voice: Rewrite lines that sound flat, vague, or too generic.

- Publish with review: Give the last read to someone who knows the brand.

The checkpoints that protect the work

- Brand voice check: Does the piece sound like your brand, or like a generic internet article? Running this check first catches “AI smell” early.

- Claim check: Any number, promise, or comparison needs a source, or it comes out.

- Editorial override: Humans control sensitive topics, positioning, and final publication decisions.

- Governance check: Bake the rules and context in up front, so the workflow stays fast and the content doesn’t get sloppy before it ships.

That balance keeps automation useful while preserving the human judgment that makes content worth believing.

Quality signals that separate acceptable content from content that earns trust

Trusted content isn’t just well-written—it’s reliably evidenced, clearly structured, and editorially restrained. When more teams use AI to draft, the differentiator becomes the signals your review process leaves behind.

If a page feels specific, grounded, and easy to scan without becoming shallow, readers interpret that as competence. If it sounds busy but vague, the gap usually isn’t grammar—it’s a lack of verifiable detail or original framing.

These signals show up repeatedly in strong editorial work:

What quality looks like in practice

- Accuracy: Every claim should connect to a named source, direct observation, verified internal data, or a clearly stated basis.

- Specificity: Concrete examples beat generic assertions—especially in crowded topics where everyone ‘sounds helpful.’

- Original framing: Trusted pages add a point of view, not just a summary of the consensus.

- Readable structure: Clear headings, scannable paragraphs, and a tight match to the reader’s intent keep people moving.

- Editorial restraint: Good content also knows what not to say. Limits and caveats (when appropriate) often protect trust.

Checklist for evaluating content before publication

| Quality check | Pass/fail criteria | Why it matters | Owner |
|---|---|---|---|
| Claims are verified | Pass if every factual claim has a source; fail if numbers or assertions are vague | Accuracy is the first trust signal | Subject-matter expert |
| Brand voice is consistent | Pass if the tone sounds like one author; fail if sections feel stitched together | Readers notice drift immediately | Editor |
| Examples are specific | Pass if examples name a real scenario, workflow, or outcome; fail if they stay abstract | Specificity makes advice believable | Content writer |
| Search intent is matched | Pass if the page answers the query the reader actually typed; fail if it wanders off topic | Relevance keeps bounce rates down | SEO editor |
| No factual gaps remain | Pass if the piece answers obvious follow-up questions; fail if it leaves obvious holes | Gaps create doubt fast | Editor and legal reviewer |
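The checklist can also work as a hard publish gate: a piece ships only when every check has been run and passed, and each failure is routed back to its owner. The sketch below assumes you record results in a small structure; `CheckResult`, `REQUIRED_CHECKS`, and `publish_gate` are hypothetical names, and treating a skipped check as a failure is a deliberate assumption, not a rule from any specific CMS.

```python
from __future__ import annotations

from dataclasses import dataclass

# The five checks from the table; a check that was never run counts as a blocker.
REQUIRED_CHECKS = (
    "Claims are verified",
    "Brand voice is consistent",
    "Examples are specific",
    "Search intent is matched",
    "No factual gaps remain",
)


@dataclass
class CheckResult:
    check: str
    owner: str
    passed: bool
    note: str = ""


def publish_gate(results: list[CheckResult]) -> tuple[bool, list[str]]:
    """Return (ready_to_publish, blockers), naming the owner of each failure."""
    blockers = [
        f"{r.check} -> {r.owner}" + (f" ({r.note})" if r.note else "")
        for r in results
        if not r.passed
    ]
    ran = {r.check for r in results}
    blockers += [f"{name} -> not run" for name in REQUIRED_CHECKS if name not in ran]
    return (not blockers, blockers)


if __name__ == "__main__":
    results = [
        CheckResult("Claims are verified", "Subject-matter expert", False, "two statistics lack sources"),
        CheckResult("Brand voice is consistent", "Editor", True),
        CheckResult("Examples are specific", "Content writer", True),
        CheckResult("Search intent is matched", "SEO editor", True),
        CheckResult("No factual gaps remain", "Editor and legal reviewer", True),
    ]
    ready, blockers = publish_gate(results)
    print("Ready to publish:", ready)
    for blocker in blockers:
        print("Blocked:", blocker)
```

Reporting the owner alongside each failure keeps the gate useful rather than just restrictive: the fix goes straight to the person responsible for it.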

The real scaling problem often isn’t content volume—it’s misassignment. When every piece is treated like the same kind of job, quality and trust degrade fast.

A cleaner approach is to sort work by content type and business goal.

AI is strongest where speed, pattern spotting, and repeatable structure matter. People matter where judgment, taste, and risk live.

For example, use AI for topic clustering, first-pass outlines, content tagging, and repurposing.

Keep humans on thought leadership, sensitive brand messaging, customer-facing claims, and anything that could create legal or compliance trouble.

A simple way to assign work looks like this:

- Awareness content: Let AI draft variations, then have humans choose the angle that sounds most like the brand.

- Consideration content: Use AI for research summaries and structure, then add examples, proof, and opinion by hand.

- Conversion content: Keep humans in charge of claims, objections, and final edits—where trust is won or lost.

- Retention content: Use AI for segmentation and delivery timing, but keep the message warm, specific, and familiar.

- Repurposing: Prime AI territory. One strong article can become platform-specific posts, email snippets, and short summaries without starting from zero.

Measurement needs the same discipline.

Output count is a vanity metric if half the pages do nothing.

Track qualified traffic, conversion rate, editorial rework rate, time from brief to publish, and how often content lands in the top tier of engagement for its format.
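Some of these metrics are easy to compute from records most teams already keep. The sketch below is one possible approach, with assumed definitions: editorial rework rate is taken as the share of pieces sent back for at least one round of substantial revision, time from brief to publish is the median number of days, and conversion rate is conversions divided by qualified sessions (however your team defines "qualified"). The `Piece` type and `content_metrics` helper are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass
class Piece:
    briefed: date
    published: date
    rework_rounds: int   # rounds of substantial revision (assumed definition)
    sessions: int        # qualified sessions, per your own definition
    conversions: int


def content_metrics(pieces: list[Piece]) -> dict[str, float]:
    """Summarize a batch of published pieces by outcome, not output count."""
    if not pieces:
        raise ValueError("need at least one published piece")
    total_sessions = sum(p.sessions for p in pieces)
    return {
        # Share of pieces that needed at least one rework round.
        "editorial_rework_rate": sum(p.rework_rounds > 0 for p in pieces) / len(pieces),
        # Median is less distorted than the mean by one stalled piece.
        "median_days_brief_to_publish": median((p.published - p.briefed).days for p in pieces),
        "conversion_rate": sum(p.conversions for p in pieces) / total_sessions if total_sessions else 0.0,
    }


if __name__ == "__main__":
    # Hypothetical numbers for illustration only.
    batch = [
        Piece(date(2025, 1, 6), date(2025, 1, 10), 0, 1200, 18),
        Piece(date(2025, 1, 6), date(2025, 1, 20), 2, 300, 1),
        Piece(date(2025, 1, 13), date(2025, 1, 17), 1, 800, 9),
    ]
    print(content_metrics(batch))
```

A rising rework rate next to a falling brief-to-publish time is the pattern to watch: it usually means speed is being bought with editorial cleanup later.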

That matters because the adoption gap is real: teams either overtrust the machine or keep it locked out of useful work.

Common mistakes show up fast:

- Overtrusting AI: Content sounds polished but feels generic. The fix is a human review pass on voice, claims, and context.

- Underusing AI: Teams ask people to do every routine task manually. That burns time and slows publishing for no good reason.

- Measuring volume only: More posts do not mean more impact. Better signals are coverage, engagement quality, and downstream action.

- Skipping governance: If your review process doesn’t include authenticity/voice checks and compliance gating, speed becomes the enemy of trust.

The teams that scale well usually keep humans in charge of meaning—not just cleanup.

That balance is the one worth building toward.

Does AI content rank as well as human-written content on Google?

No—purely AI-generated pages are far less likely to reach Google’s #1 spot. A Semrush study covered by Search Engine Land found human-written pages hit Google’s top position 80% of the time, while purely AI-generated pages did so 9% of the time. The takeaway is that ranking and speed don’t automatically translate into trust.

What is the best hybrid workflow for AI vs human content creation to maintain quality and brand voice?

Use a hybrid workflow where AI handles the high-volume, repeatable steps and humans own the final judgment. Let AI draft, perform triage/tagging, and produce summaries, then have humans verify facts, apply editorial judgment, enforce brand voice, and handle compliance. This matters because, according to HubSpot’s 2026 State of Marketing Report, only 6% of marketers have fully embedded AI into their workflows, so control and quality, not just speed, drive better results.

Keep the Human Layer Where Trust Is Won

AI content creation is getting better at speed, structure, and cleanup, but it still does not win trust on its own.

The content quality debate usually turns on one simple point: machines can assemble information, but people decide whether it feels earned, specific, and worth believing.

That is why the strongest pieces usually don’t come from treating “human vs AI content” as a rivalry; they come from using both in the right order.

The decision matrix showed the same pattern across use cases: AI handles the first pass, and human writers make the judgment calls.

That matters most when the stakes are high, because vague claims and generic phrasing are what make readers drift away.

If a draft cannot survive a sharp-eyed edit, it was never ready to publish.

The move to make today is practical, not philosophical. Take one live article, ask AI to rebuild the outline, and then have a person add proof, examples, and a clearer point of view. If that process makes the piece sharper without flattening its voice, you are heading in the right direction.

And if your team wants a cleaner system for doing that at scale, our approach is built around keeping automation fast without letting the human layer disappear.