Creating more content hasn’t moved the needle for many teams—yet production demands keep rising.

Marketers struggle to balance output with attention and measurable outcomes.

Adoption is widespread: 80% of marketers reported using AI tools in content marketing strategies as of 2025.

That shift hasn’t just automated tasks; it changed expectations about what content can do.

Surveys show measurable benefits: 56% of businesses using AI-powered content tools saw higher engagement in 2025.

A 2026 McKinsey study found 65% of companies reported improved ROI after adopting AI-driven marketing.

Today, three changes matter: automation that cuts scheduling and analysis time, personalization that targets micro-segments, and analytics that surface real-time insights.

Major vendors moved fast—HubSpot launched AI-powered content strategy tools in 2025 and Google Analytics 4 added automated insights the same year.

Jasper.ai grew its user base by over 150% between 2024 and 2025.

Those shifts force a new skill set: pattern reading, data judgment, and editorial direction.

Applied well, AI turns content from calendar items into a measurable source of growth.


The moment: AI meets a stalled content engine

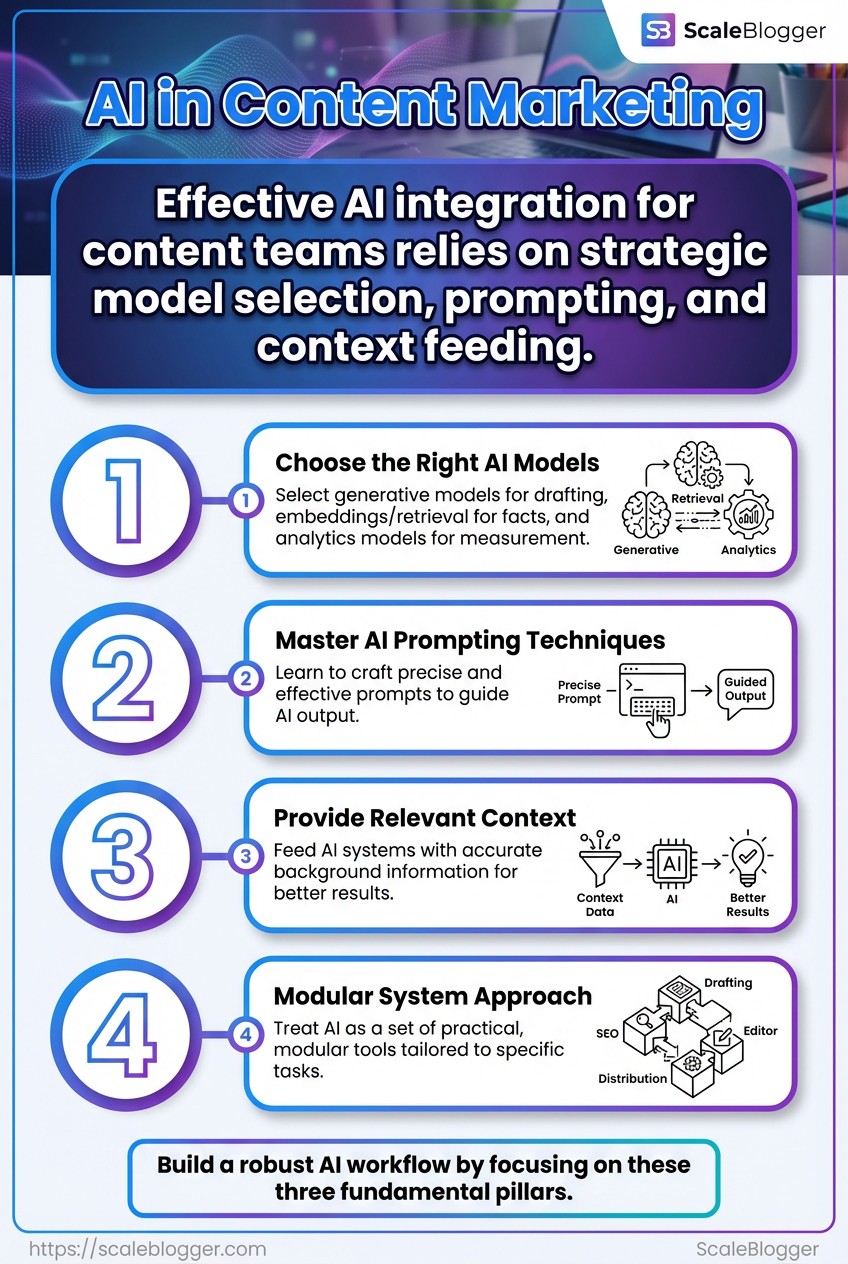

As content demands rise, many marketers face a familiar struggle: creating more content hasn’t moved the needle.

With 80% of marketers using AI tools by 2025, adoption reflects less an appetite for novelty than a need to solve scaling problems.

The content pipeline often stalls at ideation: drafts pile up and deadlines slip. AI has become a practical response to these bottlenecks, replacing manual steps that limit scale.

Current tools support this workflow by automating tasks such as research, drafting, and scheduling.

By doing so, content teams can regain control over the editorial process while increasing throughput.

AI fundamentals for content teams

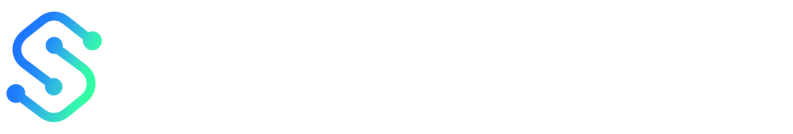

AI today is a set of practical tools, not magic.

For content teams that means focusing on three things: which models you pick, how you prompt them, and how you feed them context.

Nail those and AI becomes a reliable co-creator rather than an unpredictable generator.

Most high-performing teams treat AI as a modular system: a generative model for drafting, embeddings and retrieval for factual context, and analytics models for measurement.

That split keeps creative work human-led while letting machines handle scale and pattern-finding.

By 2025, about 80% of marketers reported using AI tools in content workflows; 56% saw higher engagement using AI-driven content (2025), and 65% of companies reported improved ROI from AI in marketing (McKinsey, 2026).

Core concepts that affect content: models, prompts, embeddings

Start with a clear mental model for what each technology does.

Models produce language.

Prompts shape intent and style.

Embeddings turn text into searchable vectors that preserve meaning.

Model: A trained system that generates or scores text. Generative models produce drafts; discriminative models score or classify.

Prompt: A short instruction that frames task, tone, constraints, and output format. Good prompts reduce revision time.

Embedding: A numeric representation of text used to match meaning across documents. Embeddings power retrieval-augmented generation and semantic search.

Tools like Jasper.ai expanded quickly between 2024 and 2025 because teams adopted them for first-draft speed.

Meanwhile, platforms such as Google Analytics 4 added AI-driven predictive metrics in 2025, improving how teams measure content impact.
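To make "searchable vectors" concrete, here is a minimal Python sketch of semantic search over embeddings. It assumes the vectors were already produced by some embedding model; the four-dimensional values and document names are invented for illustration (real embeddings have hundreds or thousands of dimensions). Ranking by cosine similarity is one common choice, not the only one.

```python
import math

# Hypothetical 4-dimensional "embeddings"; the values are made up for illustration.
DOCS = {
    "pricing-guide": [0.9, 0.1, 0.0, 0.2],
    "onboarding-checklist": [0.1, 0.8, 0.3, 0.0],
    "api-rate-limits": [0.0, 0.2, 0.9, 0.4],
}


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def semantic_search(query_vec: list[float], docs: dict, top_k: int = 2) -> list[tuple[str, float]]:
    """Rank documents by similarity to the query vector, highest first."""
    scored = [(doc_id, cosine_similarity(query_vec, vec)) for doc_id, vec in docs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Pretend this vector came from embedding the question "How much does the plan cost?"
query = [0.8, 0.2, 0.1, 0.1]
for doc_id, score in semantic_search(query, DOCS):
    print(f"{doc_id}: {score:.3f}")
```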

When to choose generative models vs retrieval-augmented methods

If the goal is creative exploration, go generative.

Use it for headlines, outlines, or rough drafts where novelty matters.

If accuracy and source grounding matter, use retrieval-augmented generation (RAG).

RAG fetches relevant documents via embeddings, then generates with citations or source-based constraints.

Define the success metric: creativity vs factual accuracy.

Prototype both: run a blind A/B test on draft quality and revision time.

If hallucinations rise, add retrieval and tighter editorial constraints.

Move high-risk content (legal, technical) to RAG-first workflows.
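A RAG-first workflow changes what the model sees, not just which model you call. The hypothetical Python sketch below retrieves passages from a small made-up source list and builds a prompt that restricts the model to those passages and requires bracketed citations. Keyword overlap stands in for embedding retrieval to keep the example short, and the model call itself is omitted.

```python
import re

# Hypothetical approved sources; in production these would come from an
# indexed knowledge base and be retrieved with embeddings.
SOURCES = [
    {"id": "S1", "title": "2025 pricing sheet", "text": "The Pro plan costs $49 per seat per month."},
    {"id": "S2", "title": "Security whitepaper", "text": "Data is encrypted at rest with AES-256."},
    {"id": "S3", "title": "Release notes", "text": "Version 3.2 added SSO to the Pro plan."},
]


def tokens(text: str) -> set[str]:
    """Lowercase word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, sources: list[dict], top_k: int = 2) -> list[dict]:
    """Keyword-overlap retrieval, standing in for embedding similarity."""
    q = tokens(question)
    ranked = sorted(sources, key=lambda s: len(q & tokens(s["text"])), reverse=True)
    # Drop sources that share no words with the question at all.
    return [s for s in ranked[:top_k] if q & tokens(s["text"])]


def build_grounded_prompt(question: str, retrieved: list[dict]) -> str:
    """Restrict the model to retrieved passages and require [id] citations."""
    if retrieved:
        context = "\n".join(f"[{s['id']}] {s['title']}: {s['text']}" for s in retrieved)
    else:
        context = "(no matching sources)"
    return (
        "Answer using ONLY the sources below and cite each claim as [source id]. "
        "If the sources do not contain the answer, reply 'Not in sources.'\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


question = "What does the Pro plan cost?"
print(build_grounded_prompt(question, retrieve(question, SOURCES)))
```

Returning an explicit "no matching sources" context, rather than letting the model answer freely, is what keeps high-risk content from drifting into unsupported claims.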

Data inputs that improve AI outputs

Quality inputs beat fancy prompts every time.

Train models or tune prompts with real examples, style guides, and negative examples.

Training sets: Use 100–1,000 labeled examples for style-tuning; more for domain-specific claims.

Style guide: Embed voice rules and forbidden phrases as prompt constraints.

Editorial constraints: List mandatory citations, length, and tone rules for each content type.

Metadata: Add audience segment, funnel stage, and target keywords to prompts.

Negative examples: Show drafts that failed and explain why.
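One way to keep these inputs consistent is to assemble them into the prompt programmatically instead of retyping them. This is a minimal Python sketch under assumed names (ContentInputs, build_prompt, and violates_style are hypothetical helpers, not part of any tool mentioned here); it also reuses the forbidden-phrase list as a cheap post-generation check.

```python
from dataclasses import dataclass, field


@dataclass
class ContentInputs:
    """Hypothetical container for the inputs listed above."""
    voice_rules: list[str]
    forbidden_phrases: list[str]
    constraints: dict[str, str]  # e.g. length, tone, citation rules
    audience_segment: str
    funnel_stage: str
    target_keywords: list[str]
    negative_examples: list[tuple[str, str]] = field(default_factory=list)  # (bad draft, why it failed)


def build_prompt(task: str, inputs: ContentInputs) -> str:
    """Combine task, style guide, constraints, metadata, and negative examples."""
    lines = [f"Task: {task}", "", "Voice rules:"]
    lines += [f"- {rule}" for rule in inputs.voice_rules]
    lines.append("Never use these phrases: " + ", ".join(f'"{p}"' for p in inputs.forbidden_phrases))
    lines.append("Constraints:")
    lines += [f"- {key}: {value}" for key, value in inputs.constraints.items()]
    lines.append(
        f"Audience: {inputs.audience_segment} | Funnel stage: {inputs.funnel_stage} | "
        f"Keywords: {', '.join(inputs.target_keywords)}"
    )
    if inputs.negative_examples:
        lines.append("Avoid drafts like these:")
        for draft, reason in inputs.negative_examples:
            lines.append(f'- "{draft}" (failed because: {reason})')
    return "\n".join(lines)


def violates_style(draft: str, inputs: ContentInputs) -> list[str]:
    """Post-check: return any forbidden phrases that slipped into a draft."""
    lowered = draft.lower()
    return [p for p in inputs.forbidden_phrases if p.lower() in lowered]


inputs = ContentInputs(
    voice_rules=["Plain language", "Address the reader as 'you'"],
    forbidden_phrases=["game-changing", "synergy"],
    constraints={"length": "800-1,000 words", "citations": "link every statistic"},
    audience_segment="IT managers",
    funnel_stage="consideration",
    target_keywords=["SSO setup"],
    negative_examples=[("Our game-changing platform...", "hype with no specifics")],
)
print(build_prompt("Write a how-to article on SSO setup", inputs))
```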

Teams should treat these inputs as living assets.

Update style guides from actual performance data and feed new examples back into prompt libraries.

Use platforms like HubSpot’s 2025 content tools to surface topic gaps, and feed GA4 predictive signals back into editorial planning.

Small investments in training sets and constraints cut revision time and raise engagement.

Designing AI-driven content workflows

Teams that win with AI design the workflow first, then pick tools to fit it.

With 80% of marketers using AI tools by 2025, the practical question is where AI should sit in your process, not whether you should use it.

Build AI as an insertion layer that handles repeatable work while humans keep the judgment tasks.

An effective workflow maps the content lifecycle from discovery through distribution and places AI at the points that accelerate throughput or improve precision.

Use AI for ideation, clustering, drafting, personalization, and analytics, and keep humans in the loop for framing, quality, and ethics.

This approach reduces busywork and preserves editorial control.

It also makes measurement straightforward — you can compare outcomes across AI-assisted and human-only steps using the same KPIs.

Discovery insertion: use ML topic suggestion to seed briefs.

HubSpot added AI content strategy tools in 2025 that do exactly this.

Clustering insertion: group topics and map to pillar pages with algorithmic clustering for scale.

Drafting insertion: apply generative tools (for example, Jasper.ai) to produce first drafts or section outlines.

Personalization insertion: generate audience-tailored variants before distribution.

Analytics insertion: feed performance back to models.

Google Analytics 4’s 2025 AI enhancements offer automated insights you can act on.

The visual above shows a six-stage roadmap: intake → topic clustering → prompt templates → draft generation → human edit → CMS publish.

It highlights control gates where humans must review outputs and where automated QA runs.

Intake: capture briefs, audience signals, and keywords into a central intake form.

Topic clustering: run algorithmic clustering to create topic groups and pillar pages.

Prompt templates: standardize prompt families for briefs, outlines, and CTAs.

Draft generation: generate a structured first draft with model-level metadata.

Human edit: editors apply narrative, accuracy checks, and brand voice.

CMS publish: push approved content into CMS with scheduled distribution.
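The control gates can be enforced in code as well as in process. The Python sketch below models the six stages as an ordered enum and refuses to advance an item past a gate until the required check is recorded. The specific gates and their names (automated_qa_passed, editor_approved) are assumptions for illustration; map them to your own review steps.

```python
from enum import Enum


class Stage(Enum):
    INTAKE = 1
    TOPIC_CLUSTERING = 2
    PROMPT_TEMPLATES = 3
    DRAFT_GENERATION = 4
    HUMAN_EDIT = 5
    CMS_PUBLISH = 6


# Hypothetical gates: the check that must be recorded before entering a stage.
GATES = {
    Stage.HUMAN_EDIT: "automated_qa_passed",  # automated QA runs on the draft first
    Stage.CMS_PUBLISH: "editor_approved",     # a human signs off before publishing
}


class ContentItem:
    def __init__(self, title: str):
        self.title = title
        self.stage = Stage.INTAKE
        self.checks: dict[str, bool] = {}

    def advance(self) -> Stage:
        """Move to the next stage, refusing if that stage's gate has not passed."""
        if self.stage is Stage.CMS_PUBLISH:
            raise ValueError(f"{self.title!r} is already published")
        next_stage = Stage(self.stage.value + 1)
        gate = GATES.get(next_stage)
        if gate and not self.checks.get(gate, False):
            raise PermissionError(f"{self.title!r} blocked before {next_stage.name}: {gate} missing")
        self.stage = next_stage
        return next_stage


item = ContentItem("SSO setup guide")
for _ in range(3):
    item.advance()  # intake -> clustering -> templates -> draft generation
try:
    item.advance()  # blocked: automated QA has not been recorded yet
except PermissionError as err:
    print(err)
item.checks["automated_qa_passed"] = True
item.advance()  # now in HUMAN_EDIT
```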

Content Strategist: defines themes, KPIs, and final approval lines.

Prompt Engineer: maintains the prompt library, versions prompts, and records A/B outcomes.

Editor-in-Chief: enforces voice, legal safety, and final publish quality.

SEO Analyst: verifies intent alignment and SERP opportunities; adjusts keyword targeting.

Compliance Reviewer: audits claims, sources, and regulated content areas.

Publisher: schedules, tags, and routes finished assets to distribution channels.

Guardrails are non-negotiable: require a factuality checklist, a documented style guide, prompt versioning, and a regular audit sample.

Keep metrics in place: 56% of businesses using AI-powered content tools saw higher engagement in 2025, and 65% of companies reported improved ROI (McKinsey, 2026), so measure AI's lift, not just its output.

Design workflows around decision points, not tools, and the result is faster throughput with human judgment preserved.

Tool selection and feature comparison

Picking tools feels like building a kitchen: the recipes come next, but the appliances set what you can realistically cook.

Choose platforms that match the team’s skills and the stack you already run.

Focus first on where you need time savings — drafting, approvals, or distribution — then match features to those bottlenecks.

Teams usually split tools into three practical categories when evaluating options.

Each category addresses a different part of the content lifecycle and demands different priorities from IT and operations.

Content generators: AI models and writing platforms that create drafts and outlines.

Assistants: Plugins and editor tools that suggest edits, SEO points, or tone adjustments.

Orchestration platforms: CMS, calendars, and automation layers that schedule, publish, and repurpose content.

Editorial controls: Governance features such as role permissions and versioning; these are different from fine-tuning, which modifies output behavior.

API access: If you plan to stitch tools into an automated pipeline, API availability is non-negotiable.

Scheduling and integrations: Native CMS/calendar hooks remove custom engineering work and save weeks on setup.

Feature comparison: what to prioritize for time savings and scheduling

| Tool name | Primary function | API access (Y/N) | Editorial controls (fine-tuning / role permissions) | CMS / calendar integrations | Pricing model |
|---|---|---|---|---|---|
| OpenAI (ChatGPT API) | LLM content generation & embeddings | Y | Fine-tuning available; permissions via app layer | Integrates via API with CMS/plugins | Usage-based / pay-as-you-go |
| Anthropic Claude | Safety-focused LLM for drafts & assistants | Y | Enterprise fine-tuning; admin controls for teams | API connectors and third-party integrations | Usage-based / enterprise options |
| Jasper.ai | Marketing-first content generation | Y | Template controls; team roles and review workflows | WordPress, HubSpot plugins, calendar exports | Freemium → subscription plans |
| HubSpot (AI tools) | Content strategy + CRM-tied content | Y | Role permissions within HubSpot; some content controls | Native calendar, CMS, and CRM workflows | Included in HubSpot Marketing tiers |
| Copy.ai | Quick marketing copy and templates | Y | Basic team roles; limited fine-tuning | Zapier, CMS plugins | Freemium → paid monthly plans |
| Writesonic | Scalable content drafts and ads | Y | Project-level roles; tone controls | Zapier, CMS integrations | Freemium → paid tiers |
| SurferSEO | SEO-driven content optimization | Y | Content grading and editorial suggestions; team access | WordPress, Google Docs integrations | Subscription-based |
| Contentful | Headless CMS / orchestration | Y | Granular role permissions; workflow APIs | Native scheduling, supports calendar sync | Free tier → paid enterprise plans |
| Custom in-house model | Tailored generation and controls | Y (internal) | Fully customizable fine-tuning and ACLs | Built to fit existing CMS/calendar | Variable: infra + engineering costs |

Choosing between these often comes down to integration cost versus immediate capability.

If you need rapid time savings on publishing, favor tools with native CMS/calendar hooks and role-based editorial controls.

If your team is technical and needs unique model behavior, a custom model or API-first LLMs will win long-term despite higher setup costs.

For many teams, orchestration plus automation delivers the largest efficiency gains; consider platforms that combine drafting and scheduling, or pair a generator with a headless CMS. Tools such as Scaleblogger are examples of end-to-end automation that remove much of the manual handoff between draft and publish.

Match the tool to the weakest link in your workflow rather than buying the flashiest capability.

That choice saves time now and reduces technical debt later.
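To make "match the tool to the weakest link" repeatable, some teams score candidates with a weighted matrix. The Python sketch below uses placeholder tools and made-up 1-5 ratings, not assessments of the products in the table above; the point is that raising the weight on your bottleneck criterion changes which tool ranks first.

```python
# Hypothetical 1-5 ratings for illustration only; rate real candidates yourself.
CANDIDATES = {
    "Tool A (generator)": {"drafting": 5, "approvals": 2, "distribution": 1, "api_access": 5},
    "Tool B (orchestration)": {"drafting": 2, "approvals": 4, "distribution": 5, "api_access": 4},
    "Tool C (all-in-one)": {"drafting": 4, "approvals": 3, "distribution": 3, "api_access": 2},
}


def rank_tools(candidates: dict, weights: dict[str, float]) -> list[tuple[str, float]]:
    """Weighted average of ratings; weights should emphasize your biggest bottleneck."""
    total = sum(weights.values())
    scores = {
        name: sum(ratings[criterion] * w for criterion, w in weights.items()) / total
        for name, ratings in candidates.items()
    }
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Example: publishing is the weakest link, so distribution gets the most weight.
weights = {"drafting": 1, "approvals": 2, "distribution": 4, "api_access": 1}
for name, score in rank_tools(CANDIDATES, weights):
    print(f"{name}: {score:.2f}")
```

If drafting were the bottleneck instead (say drafting weighted 4 and everything else 1), the generator-style tool would rank first; rerun the scoring whenever the bottleneck shifts.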

Implementation roadmap and pilot structure

Expect measurable movement within 90 days.

In 2025, 56% of businesses using AI-powered content tools reported higher engagement, so a tight pilot with clear goals can quickly prove value or reveal gaps.

This section gives a practical 90-day plan with milestones and success criteria, a cross-functional launch checklist, and a compact evaluation template for go/no-go decisions.

Each element is written so product, editorial, and analytics teams can run it without hand-holding.

Treat the pilot as an experiment: limit scope, instrument everything, and agree upfront what “success” looks like.

Below are step-by-step actions and the decision logic teams need to scale confidently.

90-day pilot plan with milestones and success criteria

Day 0–7: Setup and alignment

Confirm scope, KPI owners, and baseline metrics. Milestones: content calendar for 6 pieces, data pipeline to GA4, access to content generation tool (e.g., Jasper.ai). Success criteria: baseline traffic and engagement recorded; stakeholders signed off.

Week 2–4: MVP content production and publish

Produce the minimum viable content set and publish on a controlled channel. Milestones: 6 published pieces, one atomized social post per article. Success criteria: publish cadence met; editorial QA pass rate ≥ 95%.

Week 5–8: Measure, iterate, and personalize

Use Google Analytics 4 predictive metrics and on-page signals to refine topics and headlines. Milestones: two iterative rewrites, one personalization test segment. Success criteria: engagement lift ≥ 10% vs baseline or time-on-page lift ≥ 15%.

Week 9–12: Scale-readiness and handoff decision

Run a short A/B test on distribution and measure ROI signals. Milestones: distribution test complete, ROI model populated. Success criteria: cost per lead/content ≤ target OR engagement improvement aligned with business goals.
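The success criteria above are relative changes against the Day 0–7 baseline, so it helps to compute them the same way every time. A minimal Python sketch, assuming baseline and current values are exported from GA4 under the hypothetical keys engagement_rate and time_on_page_s:

```python
def lift(baseline: float, observed: float) -> float:
    """Relative change versus baseline: 0.10 means +10%."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (observed - baseline) / baseline


def qa_gate_passed(passed: int, reviewed: int) -> bool:
    """Week 2-4 criterion: editorial QA pass rate of at least 95%."""
    return reviewed > 0 and passed / reviewed >= 0.95


def week_5_8_passed(baseline: dict[str, float], current: dict[str, float]) -> bool:
    """Week 5-8 criterion: engagement lift >= 10% OR time-on-page lift >= 15%."""
    engagement_ok = lift(baseline["engagement_rate"], current["engagement_rate"]) >= 0.10
    time_ok = lift(baseline["time_on_page_s"], current["time_on_page_s"]) >= 0.15
    return engagement_ok or time_ok


# Hypothetical numbers: engagement is up only 5%, but time on page is up about 17%.
baseline = {"engagement_rate": 0.40, "time_on_page_s": 90.0}
current = {"engagement_rate": 0.42, "time_on_page_s": 105.0}
print(week_5_8_passed(baseline, current))
```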

Cross-functional checklist for launch readiness

Prepare each area before Day 0. Use this as a launch gate.

Product & Scope: Clear pilot scope and prioritized content themes identified.

Editorial: Style guide, prompts, and approval SLAs documented.

Engineering: Tracking events mapped to GA4 with test data flowing.

Analytics: Dashboard templates and alert thresholds ready.

Legal/Comms: IP and disclosure checks completed.

Ops & Scheduling: CMS publish access and social repurposing slots booked.

Pilot evaluation template: decision points to scale

Start with agreed thresholds and revisit them at Day 45 and Day 90.

Engagement threshold met?

Yes: proceed to distribution scale. No: diagnose whether content, audience, or distribution caused the shortfall.

Cost / ROI acceptable?

Yes: commit budget for month-on-month scale. No: test lower-cost formats or narrower audience segments.

Process reliability achieved?

Yes: document workflow and hand off to steady-state team. No: fix bottlenecks in approvals, tooling, or data flows.
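The three decision points can be encoded so every pilot review produces the same next actions. This Python sketch mirrors the template's yes/no branches; treating "ready to scale" as all three answers being yes is an assumption, not something the template mandates.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    engagement_threshold_met: bool
    roi_acceptable: bool
    process_reliable: bool


def scaling_decision(result: PilotResult) -> list[str]:
    """Map each decision point in the evaluation template to its next action."""
    return [
        "Proceed to distribution scale" if result.engagement_threshold_met
        else "Diagnose shortfall: content, audience, or distribution",
        "Commit budget for month-on-month scale" if result.roi_acceptable
        else "Test lower-cost formats or narrower audience segments",
        "Document workflow and hand off to steady-state team" if result.process_reliable
        else "Fix bottlenecks in approvals, tooling, or data flows",
    ]


def ready_to_scale(result: PilotResult) -> bool:
    """Assumed rule: scale only when all three decision points pass."""
    return result.engagement_threshold_met and result.roi_acceptable and result.process_reliable


day_90 = PilotResult(engagement_threshold_met=True, roi_acceptable=False, process_reliable=True)
for action in scaling_decision(day_90):
    print(action)
print("Scale now:", ready_to_scale(day_90))
```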

Since 65% of companies reported improved ROI from AI in marketing in 2026 (McKinsey), access to the technology is rarely the differentiator; the scaling decision is as much about process as about tech.

Run the pilot like an audit and a sprint.

Prove impact, then expand methodically — not by hoping, but by following the numbers.

Measurement, KPIs, and benchmarking

Measurement separates hopeful projects from repeatable programs. Build KPIs that map to visibility, engagement, and efficiency, then make them hard to argue with by tying each to a single measurement method and a fixed time window.

Start with clear baselines and pilot targets that your analytics stack can actually prove.

Use GA4 for session and engagement metrics, Search Console for SERP CTR, and internal time-tracking for production throughput.

Make reporting simple enough for stakeholders to scan, and rigorous enough for engineers to test against.

Visibility: The volume and quality of discoverable content that brings new and returning users to your site via organic search and referrals.

Engagement: The depth of interactions once a reader arrives, such as time on page, scroll depth, comments, and on-page conversions.

Efficiency: The throughput and cost of producing content, such as hours per asset, assets per month, and cost per published piece.

How teams typically measure these in pilots:

Baseline snapshots: capture 8–12 weeks of pre-pilot data.

Pilot targets: set percent improvements derived from baseline data, not guesses.

Single source of truth: designate GA4/Search Console/CMS as canonical.

Cadence: report weekly for ops, monthly for leadership.

Benchmark table: sample KPIs before and after AI adoption

| Metric | Baseline (typical) | Pilot target | Measurement method | Time window |
|---|---|---|---|---|
| Organic sessions | 10,000 / month | 13,000 / month (+30%) | GA4: organic sessions | 3 months |
| Average time on page | 90 s | 120 s (+33%) | GA4: average engagement time | 3 months |
| Content production time per asset | 8 hours | 3.5 hours (−56%) | Time-tracking (Asana/Clockify) | 2 months |
| Publishing frequency | 2 posts / week | 4 posts / week | CMS publish logs | 3 months |
| CTR from SERP | 2.5% | 3.5% (+40%) | Google Search Console: CTR | 3 months |
| On-page conversion rate | 1.2% | 1.8% (+50%) | GA4: key events (formerly conversions) | 3 months |
| Social shares per article | 30 | 60 (+100%) | Social analytics (native + share widgets) | 3 months |
| Content ROI (revenue per asset) | $500 | $900 (+80%) | Revenue attribution / GA4 ecommerce | 6 months |

These numbers are realistic pilot targets, not guaranteed outcomes.

Use them to set expectations and to size experiments.

Start attribution with small, controlled tests.

Holdout groups and UTM granularity will do most of the heavy lifting.

Run an experiment-first approach:

Create a holdout: keep 10–20% of topics off AI-assisted workflows as a control.

Tag everything: use consistent UTM parameters and content IDs for every asset.

Run parallel A/B tests: test AI-assisted headlines or meta descriptions against human originals.

Measure incremental lift: compare control vs. treatment for visits, CTR, and conversions.

Model cross-channel effects: use time-series regression and multi-touch checks to identify spillover.
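Here is a hedged Python sketch of the holdout, tagging, and lift steps: hash-based holdout assignment (so a topic always lands in the same arm), UTM tagging that records the arm in utm_content, and a relative-lift calculation. utm_source, utm_medium, utm_campaign, and utm_content are the standard UTM parameters. The example URL and campaign name, and the choice to encode the arm in utm_content, are assumptions. A real readout would also need a significance test.

```python
import hashlib
from urllib.parse import urlencode


def in_holdout(topic_id: str, holdout_share: float = 0.15) -> bool:
    """Deterministically place roughly holdout_share of topics in the human-only control."""
    digest = hashlib.sha256(topic_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0xFFFFFFFF  # first 32 bits of the hash, scaled to [0, 1]
    return bucket < holdout_share


def tagged_url(base_url: str, source: str, medium: str, campaign: str,
               content_id: str, arm: str) -> str:
    """Append standard UTM parameters; utm_content records content ID and test arm."""
    params = {
        "utm_source": source,
        "utm_medium": medium,
        "utm_campaign": campaign,
        "utm_content": f"{content_id}-{arm}",
    }
    return f"{base_url}?{urlencode(params)}"


def incremental_lift(control_rate: float, treatment_rate: float) -> float:
    """Relative lift of AI-assisted (treatment) over holdout (control)."""
    return (treatment_rate - control_rate) / control_rate


topic = "sso-setup-guide"
arm = "control" if in_holdout(topic) else "treatment"
print(tagged_url("https://example.com/blog/sso", "newsletter", "email", "ai-pilot", topic, arm))
# Hypothetical conversion rates for each arm after the test window:
print(f"{incremental_lift(0.020, 0.025):.0%}")
```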

GA4’s predictive metrics and automated insights can speed diagnosis, while CRM touchpoints reveal downstream revenue impact.

Also remember that McKinsey found improved ROI in a majority of firms using AI in marketing in 2026, which reinforces measuring both short-term engagement and longer-term business outcomes.

Clear baselines, disciplined tagging, and simple lift tests are enough to isolate AI’s contribution to growth.

Keep the measurement plan tight and the reporting short so decisions stay fast and evidence-driven.

Governance, compliance, and quality control

Good governance turns AI from a risky gadget into predictable infrastructure.

Policies must be concrete: define what data models can see, who signs off on content, and how every generated claim will be traced back to its source.

Treat compliance as an operational system, not a one-time checklist.

That means policy documents, automated enforcement where possible, and continuous audit logs that reviewers can query.

Model provenance and editorial controls protect reputation and legal exposure.

Capture model versioning, prompt templates, and the evidence behind factual claims so every piece of content has an audit trail.

Policies: data privacy, copyright, and model provenance

Start with clear, short policy statements that content teams can apply day-to-day.

Policies should be reviewed by legal and mapped to specific workflow gates (e.g., no-personal-data prompts, mandatory citation pass).

Data privacy: Require removal or anonymization of any PII before it enters an LLM prompt. Implement automated redaction in the ingestion pipeline and log a data_redaction_id for each draft.

Copyright: Insist on source attribution for direct quotes and require a reuse license check when content is based on third‑party material. Keep a source_license_record attached to each asset.

Model provenance: Record model_version, prompt_template, temperature, and timestamp for every generation. Store that metadata alongside generated drafts so reviewers can reproduce and trace outputs.
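A minimal Python sketch of the redaction step and the provenance record described above, reusing the policy's field names. The regular expressions catch only simple email and phone formats and will produce false positives (some date strings, for example), so treat them as placeholders for a vetted PII detector; the model, template, and license values in the usage are hypothetical.

```python
import re
import uuid
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Deliberately simple patterns; swap in a vetted PII detector for production.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> tuple[str, str, int]:
    """Mask emails and phone numbers; return (clean_text, data_redaction_id, matches)."""
    clean, n_email = EMAIL.subn("[EMAIL]", text)
    clean, n_phone = PHONE.subn("[PHONE]", clean)
    return clean, uuid.uuid4().hex, n_email + n_phone


@dataclass
class ProvenanceRecord:
    """Metadata stored next to each generated draft."""
    model_version: str
    prompt_template: str
    temperature: float
    timestamp: str
    data_redaction_id: str
    source_license_record: list[str]


prompt_text, redaction_id, hits = redact("Quote from jane.doe@example.com, call +1 555 010 9999.")
record = ProvenanceRecord(
    model_version="example-model-2025-01",    # hypothetical identifier
    prompt_template="case-study-outline-v3",  # hypothetical template name
    temperature=0.2,
    timestamp=datetime.now(timezone.utc).isoformat(),
    data_redaction_id=redaction_id,
    source_license_record=["CC BY 4.0: vendor benchmark report"],  # hypothetical entry
)
print(prompt_text, hits)
print(asdict(record))
```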

Editorial quality checklist and review cadence

A living editorial checklist prevents drift as models and goals change.

Make the checklist short, measurable, and machine-friendly so parts can be auto-validated before human review.


Headline accuracy: Verify claims in headlines match the article body.

Fact citations: Every factual claim must have a verifiable citation or SME sign-off.

Tone and brand voice: Confirm brand language is applied consistently.

SEO integrity: Check primary keyword placement and canonical tags.

Legal flags: Ensure no protected IP or sensitive data slipped through.

Publish readiness: Confirm analytics tracking and tag configuration.
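Some checklist items are machine-checkable before an editor opens the draft. The Python sketch below is a rough pre-filter under stated assumptions: citations are written as [n] markers or URLs, headline accuracy is approximated by checking that substantive headline words appear in the body, and the analytics check looks for the GA4 gtag.js loader URL. Tone, legal flags, and real fact verification stay with humans.

```python
import re

CITATION = re.compile(r"\[\d+\]|https?://")  # assumed citation convention: [n] or a URL
HAS_DIGIT = re.compile(r"\d")


def precheck(headline: str, body: str, html_head: str, keyword: str) -> dict[str, bool]:
    """Auto-validate machine-checkable checklist items before human review."""
    sentences = re.split(r"(?<=[.!?])\s+", body.strip())
    numeric_claims = [s for s in sentences if HAS_DIGIT.search(s)]
    headline_terms = [w for w in re.findall(r"[a-z0-9]+", headline.lower()) if len(w) > 3]
    first_100_words = " ".join(body.split()[:100]).lower()
    return {
        # Crude proxy for headline accuracy: substantive headline words appear in the body.
        "headline_terms_in_body": all(t in body.lower() for t in headline_terms),
        # Every sentence containing a number carries a citation marker.
        "numeric_claims_cited": all(CITATION.search(s) for s in numeric_claims),
        "keyword_in_first_100_words": keyword.lower() in first_100_words,
        "canonical_tag": 'rel="canonical"' in html_head,
        "analytics_tag": "googletagmanager.com/gtag/js" in html_head,
    }


report = precheck(
    headline="SSO setup reduces onboarding time",
    body=("SSO setup reduces onboarding time by removing manual account creation. "
          "Teams cut onboarding time by 30% [1]. Legacy logins took 12 minutes."),
    html_head='<link rel="canonical" href="https://example.com/sso">',
    keyword="SSO setup",
)
print(report)
```

Here the draft would be sent back before human review because one numeric sentence lacks a citation and the analytics tag is missing.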

Review cadence (minimum):

Draft-level check within 24 hours of generation.

Editorial review and citation pass before scheduling.

SME validation for technical/legal topics within 48 hours.

Monthly content-sample audits to validate checklist efficacy.

Handling hallucinations and factual errors: process and tools

Expect hallucinations; plan for fast detection, rollback, and correction.

Combine automated detectors, human triage, and provenance-driven fixes.

Detect: Run automated claim-extraction and fact-checking against trusted knowledge bases.

Flag: Assign a claim_id to suspect sentences and surface them in the editor dashboard.

Triage: A human reviewer confirms the falsehood, then selects an action: edit, annotate, or retract.

Correct: Replace with verified wording and append sources; record a correction_id.

Monitor: Use Google Analytics 4 and editorial KPIs to identify content performance anomalies that suggest factual problems.

Audit: Quarterly provenance audits validate that model_version and prompt changes reduced hallucination rates.
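The Detect and Flag steps can start very simply. This hypothetical Python sketch treats any sentence containing a figure as a checkable claim, assigns it a stable claim_id, and marks it verified only if a trusted statement contains the same figures and shares at least three words; everything else goes to human triage. The trusted list and matching thresholds are illustrative assumptions, far cruder than a real fact-checking service.

```python
import hashlib
import re

# Hypothetical verified statements from your knowledge base.
TRUSTED = [
    "80% of marketers reported using AI tools in content marketing in 2025.",
    "56% of businesses using AI-powered content tools saw higher engagement in 2025.",
]


def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def figures(text: str) -> set[str]:
    """Numbers and percentages, e.g. '2025', '80%', '3.5'."""
    return set(re.findall(r"\d+(?:\.\d+)?%?", text))


def flag_claims(draft: str) -> list[dict]:
    """Extract numeric claims, give each a claim_id, and flag unsupported ones."""
    results = []
    for sentence in re.split(r"(?<=[.!?])\s+", draft.strip()):
        nums = figures(sentence)
        if not nums:
            continue  # only sentences with figures are treated as checkable claims here
        supported = any(
            nums <= figures(fact) and len(words(sentence) & words(fact)) >= 3
            for fact in TRUSTED
        )
        claim_id = hashlib.sha1(sentence.encode("utf-8")).hexdigest()[:10]
        results.append({"claim_id": claim_id, "sentence": sentence,
                        "status": "verified" if supported else "needs_triage"})
    return results


claims = flag_claims(
    "In 2025, 80% of marketers used AI tools. "
    "AI tools tripled engagement for 90% of teams. Editors still approve every post."
)
for claim in claims:
    print(claim["claim_id"], claim["status"], claim["sentence"])
```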

Use tools that export metadata (some platforms and APIs provide model_version and prompt logging).

When possible, integrate an automated citation generator and a lightweight fact-check microservice into the publishing pipeline.

Good governance makes AI predictable and accountable.

When policy, provenance, and rigorous editorial discipline work together, quality becomes repeatable rather than accidental.

Scaling teams and processes

Scaling an AI-enabled content function means turning one-off wins into predictable operations.

Start by treating the pilot like a controlled experiment: define repeatable roles, a clear budget model, and tangible handoffs between strategy, editorial, and engineering teams. That discipline prevents the mess that follows when outputs grow faster than governance.

Once those building blocks exist, focus on people and measurement.

Train editors in prompt design and AI literacy so they can own quality without handing everything to engineers.

At the same time, set operational KPIs that track both output and content health — not just volume but accuracy, audience fit, and lifecycle cost.

This section gives a practical playbook for that transition: who to hire or re-role, where to place budget dollars, what training to run first, and the metrics that matter as you scale.

From pilot to program: roles, budgets, and org alignment

Make three organizational shifts when moving from pilot to program: formalize ownership, create dedicated budget lines, and map repeatable handoffs across teams.

Define ownership: Assign a Content Program Lead to own the roadmap and an ML Ops liaison for model/version decisions.

Create budget buckets: Allocate funds for software subscriptions, model costs, and human review.

Embed SLAs: Set handoff SLAs between strategy → editorial → production to avoid ad-hoc requests.

Governance seat at the table: Put legal or compliance on the steering committee for ongoing policy sign-off.

Content Program Lead: Owns KPIs, roadmap, and cross-functional coordination.

ML Ops liaison: Manages model updates, data pipelines, and API costs.

Structure roles and budgets so scaling is operational, not heroic.

Training and upskilling editorial teams on prompt design and AI literacy

Training must be practical, hands-on, and iterated with real work.

Focus on prompt design, error modes, and critical review skills so editors can judge outputs, not merely copy-edit them.

Core prompt skills: One-hour workshops on prompt templates, temperature, and instruction framing.

Error recognition: Short sessions showing hallucinations, bias, and factual drift with examples.

Tool fluency: Walkthroughs for platforms your team uses (note: HubSpot launched AI content tools in 2025 that many teams adopted).

Peer review labs: Weekly lab where two editors evaluate model outputs and document fixes.

Pair each training module with a simple rubric that rates accuracy, tone, and publishability.

That rubric becomes your team’s common language.

Operational metrics to monitor as you scale

Metrics should balance efficiency, quality, and business impact.

Track leading indicators for problems before they compound.

Throughput (published pieces per week): measures volume and bottlenecks.

Quality (publish pass rate): percent of AI drafts needing minimal edits.

Cost (cost per published piece): model/API plus human review spend, divided by output.

Engagement (audience lift): pageviews, time-on-page, or conversion delta per piece.

Model health (prompt success rate): percent of prompts producing acceptable outputs.

Risk (issue incidence): number of factual errors or compliance flags per month.

Lifecycle (content decay rate): percent of pieces requiring refresh within X months.
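Most of these metrics fall out of a simple per-piece log. A minimal Python sketch with hypothetical field names and made-up numbers; engagement is omitted because it comes from the analytics export rather than the production log.

```python
from dataclasses import dataclass


@dataclass
class Piece:
    """One published asset; field names are hypothetical."""
    minimal_edits: bool            # AI draft needed only light edits
    model_cost: float              # model/API spend in dollars
    review_cost: float             # human review cost in dollars
    issues: int                    # factual errors or compliance flags found
    refreshed_within_window: bool  # needed a refresh within the decay window


def operational_metrics(pieces: list[Piece], weeks: float,
                        prompts_run: int, prompts_ok: int) -> dict[str, float]:
    """Compute throughput, quality, cost, model health, risk, and decay for one period."""
    n = len(pieces)
    return {
        "throughput_per_week": n / weeks,
        "publish_pass_rate": sum(p.minimal_edits for p in pieces) / n,
        "cost_per_piece": sum(p.model_cost + p.review_cost for p in pieces) / n,
        "prompt_success_rate": prompts_ok / prompts_run,
        "issues_total": sum(p.issues for p in pieces),
        "decay_rate": sum(p.refreshed_within_window for p in pieces) / n,
    }


PIECES = [
    Piece(True, 2.0, 40.0, 0, False),
    Piece(False, 3.0, 60.0, 1, True),
    Piece(True, 1.0, 30.0, 0, False),
    Piece(True, 2.0, 50.0, 0, False),
]
print(operational_metrics(PIECES, weeks=2, prompts_run=20, prompts_ok=15))
```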

Remember to connect metrics to dollars: a recent 2026 study from McKinsey found 65% of companies reported improved ROI after integrating AI into marketing, which makes ROI tracking non-negotiable.

Scaling isn’t a finish line; it’s a continuous improvement loop. Keep roles clear, train the people doing the work, and watch the right metrics — and the program will stop being an experiment and start being predictable.

Case studies and applied examples

A recent 2026 industry analysis found that 65% of companies reported improved ROI after integrating AI into marketing workflows, and those gains are easiest to see in concrete projects.

These case examples show how teams move from pilot to repeatable outcomes without changing their brand voice or editorial standards.

Below are two grounded examples and a set of reproducible prompts and short API snippets you can copy into a pilot.

Each example focuses on process, control points, and the exact artifacts teams create so the work is repeatable.

Speeding up B2B long-form production — process and results

A typical B2B content team replaces first-draft writing and research chores with AI-assisted pipelines while keeping human editors in the loop. The goal: shrink the calendar from ideation-to-publish and increase throughput without sacrificing technical accuracy.

Define the brief and evidence requirements, then create a content spec template that editors approve.

Use an AI draft step (tools like Jasper.ai) to generate a structured outline and a 1,000–1,500 word draft.

Run the draft through a domain-check pass (SME review + citation validation).

Edit for tone, add proprietary data or quotes, and finalize images & metadata.

Publish and feed performance signals back into the topic model.

Practical result: teams report much faster cycles and fewer rewrite loops when drafts follow the template above.

The human editor becomes a quality gate, not a keyboard bottleneck.

Improving organic visibility with AI-assisted topic clustering

When content feels scattered, clustering bridges gaps and exposes link-growth opportunities. HubSpot’s 2025 content strategy tools and Google Analytics 4’s automated insights (2025 updates) make clustering actionable by combining search intent, performance data, and audience signals.

Cluster mapping: extract core queries, group by intent, then map pillar and cluster pages.

Gap scoring: rank clusters by buyer-stage value and existing coverage.

Content assignment: align briefs to clusters and set KPI targets for each piece.
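Cluster mapping and gap scoring can be prototyped before you buy tooling. The Python sketch below groups queries by intent with keyword rules and scores each cluster as buyer-stage value times the share of its queries with no existing page. The cue words, stage weights, and example queries are assumptions; production clustering would typically use embeddings or SERP overlap and would also map pillar pages.

```python
from collections import defaultdict

# Crude intent rules for illustration; checked in order, first match wins.
INTENT_RULES = [
    ("transactional", ("pricing", "buy", "demo", "cost")),
    ("commercial", ("best", "vs", "alternatives", "review")),
    ("informational", ("how", "what", "why", "guide")),
]
STAGE_VALUE = {"transactional": 3, "commercial": 2, "informational": 1}  # hypothetical weights


def intent_of(query: str) -> str:
    tokens = query.lower().split()
    for intent, cues in INTENT_RULES:
        if any(cue in tokens for cue in cues):
            return intent
    return "informational"


def gap_scores(queries: list[str], covered: set[str]) -> list[tuple[str, float]]:
    """Score = buyer-stage value x share of the cluster's queries with no page yet."""
    clusters = defaultdict(list)
    for q in queries:
        clusters[intent_of(q)].append(q)
    scores = []
    for intent, qs in clusters.items():
        uncovered_share = sum(q not in covered for q in qs) / len(qs)
        scores.append((intent, STAGE_VALUE[intent] * uncovered_share))
    return sorted(scores, key=lambda kv: kv[1], reverse=True)


QUERIES = ["sso pricing", "sso demo", "best sso tools", "okta vs azure ad",
           "how to set up sso", "what is sso"]
COVERED = {"sso pricing", "how to set up sso", "what is sso"}
print(gap_scores(QUERIES, COVERED))
```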

This method increases topical authority by ensuring every new article fits an existing cluster.

Reproducible prompts and short API examples

A reliable prompt pattern: give constraints, audience, and output format.

Use this template with most LLMs.

Prompt for an outline: Write a detailed outline for a 1,500-word article about [TOPIC], audience: [JOB TITLE], include 5 H2s and suggested data sources.

Prompt for first draft: Expand the outline into 1,200–1,500 words, keep tone technical but accessible, add two practical examples and one checklist.

Short API example (pseudo-JSON):

```json
{
  "model": "x-large",
  "prompt": "Create outline: [TOPIC] | audience: [JOB]",
  "max_tokens": 1200,
  "temperature": 0.2
}
```

Use programmatic checks after generation: run an entity-extraction pass, verify citations, and tag drafts with cluster IDs for publishing pipelines.
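To keep prompts reproducible, the outline template's placeholders can be filled in code and the request body generated from the same fields as the pseudo-JSON. This Python sketch raises an error if any [PLACEHOLDER] is left unfilled. The model name "x-large" is the example's placeholder, and real field names differ by provider, so adapt them to your API's documentation.

```python
import json
import re

OUTLINE_TEMPLATE = (
    "Write a detailed outline for a 1,500-word article about [TOPIC], "
    "audience: [JOB TITLE], include 5 H2s and suggested data sources."
)


def fill(template: str, values: dict[str, str]) -> str:
    """Replace [PLACEHOLDER] tokens; fail loudly if any are left unfilled."""
    filled = template
    for key, value in values.items():
        filled = filled.replace(f"[{key}]", value)
    leftover = re.findall(r"\[[A-Z][A-Z ]*\]", filled)
    if leftover:
        raise ValueError(f"unfilled placeholders: {leftover}")
    return filled


def request_body(prompt: str, model: str = "x-large") -> str:
    """Mirror the pseudo-JSON fields; rename them to match your provider's API."""
    return json.dumps(
        {"model": model, "prompt": prompt, "max_tokens": 1200, "temperature": 0.2},
        indent=2,
    )


outline_prompt = fill(OUTLINE_TEMPLATE, {"TOPIC": "SSO rollout", "JOB TITLE": "IT manager"})
print(request_body(outline_prompt))
```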

These applied patterns make pilots repeatable and auditable, so teams scale without losing control.

Conclusion

Make AI an ROI engine, not a content machine

The single most important idea to carry forward is this: AI only moves the needle when it’s wired to a clear business metric.

Treat the technology like a production tool that answers a hypothesis, not a shortcut to publish more.

That mindset was the through-line in the implementation roadmap and the case studies: teams that defined one measurable goal, tightened governance, and iterated fast stopped wasting output and started gaining impact.

If you want a concrete next step, pick one underperforming content pillar and run a KPI-focused pilot this month: define a single success metric, map the workflow, and assign reviewers.

Use the pilot to test tooling, roles, and quality gates; capture baseline performance and compare after four weeks.

Tools like ScaleBlogger are options for automating parts of that pipeline, but the high-return move is simple: set one metric, act, and measure — can your next 30 days prove that AI improved attention, not just output?