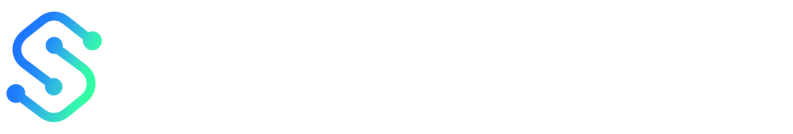

Technical SEO should be front and center of any content optimization strategy because it directly controls whether search engines can find, understand, and rank your pages. Implementing a focused technical SEO program improves crawlability, reduces page load times, and aligns site architecture with content intent, so your best content actually reaches target audiences. These fixes amplify the ROI of content creation by turning traffic potential into measurable visibility and conversions.

Teams that align technical SEO with content planning tend to rank faster and sustain organic growth, because technical barriers are removed before content is distributed. Picture a marketing team that cut average page load time from 4.2s to 1.8s and increased organic sessions by 28% after prioritizing Core Web Vitals, structured data, and canonicalization. That kind of lift comes from treating website performance and indexability as content features, not separate backend chores.

Integrating audits, monitoring, and automated fixes into editorial workflows lets technical improvements scale with content velocity. This introduction previews practical steps, audit priorities, and automation patterns that make content optimization repeatable and measurable.

- How technical checks remove friction between content and indexing

- Which Core Web Vitals and performance metrics matter for rankings

- How to embed technical SEO into editorial workflows using automation

- Common architecture issues that hide high-value content

Prioritizing technical SEO turns content investment into real search visibility.

What is Technical SEO and Why It Matters for Content

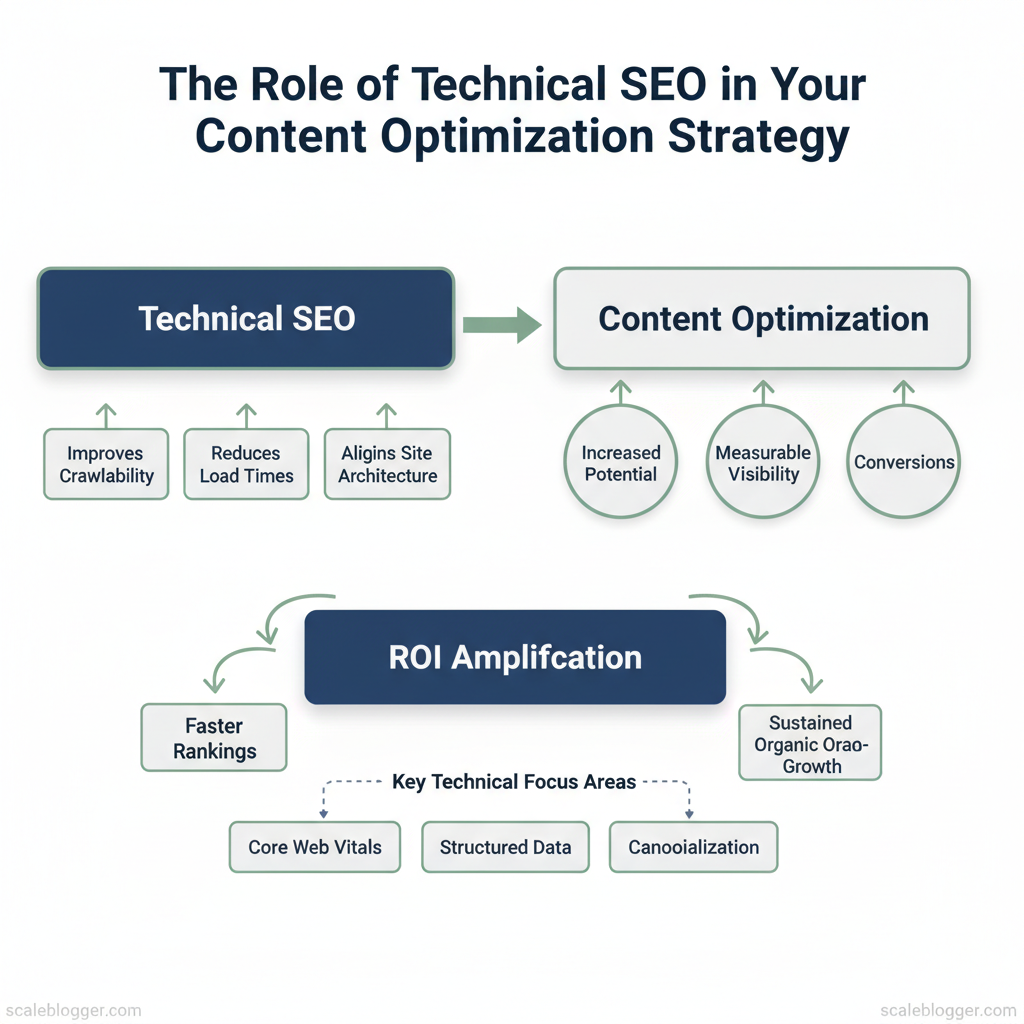

Technical SEO is the foundation that makes your content discoverable, fast, and interpretable by search engines. At its simplest, technical SEO covers the infrastructure-level rules and signals—crawlability, indexability, site structure, performance, and structured data—that determine whether search engines can find, render, and understand the pages you publish. Without those basics in place, even the best-written content can sit unseen or perform poorly because bots can’t reach it, pages load too slowly, or search engines misunderstand your page intent.

Why this matters for content: good technical SEO multiplies the value of each piece of content. It ensures topic clusters are surfaced correctly, improves user engagement through faster pages and stable layouts, and lets rich results (like breadcrumb trails or video thumbnails) lift CTR. Fixing a technical bottleneck is often the fastest way to improve organic traffic across many pages at once, because it removes systemic blockers that content edits alone can't resolve.

Core components explained

How technical SEO differs from on-page/content SEO

Practical example: a strong, well-optimized pillar page won't rank if a misconfigured `noindex` keeps it out of the index, and it will struggle if it takes 8 seconds to load. Conversely, fixing indexability or improving real-user page speed often raises impressions site-wide without rewriting content.
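To catch an accidental `noindex` before it costs rankings, a pre-publish check can look for the directive in both the page's robots meta tags and the `X-Robots-Tag` HTTP header. The sketch below uses only Python's standard library; the function and class names are hypothetical, and it assumes you already have the page HTML and response headers in hand (fetching them is left out).

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # HTMLParser lowercases tag and attribute names, but not attribute values.
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() in {"robots", "googlebot"}:
            for token in attr_map.get("content", "").split(","):
                self.directives.add(token.strip().lower())


def is_noindexed(html, headers=None):
    """Return True if meta robots tags or an X-Robots-Tag header say 'noindex' (or 'none')."""
    parser = RobotsMetaParser()
    parser.feed(html)
    directives = set(parser.directives)
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            for token in value.split(","):
                # Drop an optional user-agent prefix such as "googlebot: noindex".
                directive = token.split(":", 1)[-1]
                directives.add(directive.strip().lower())
    return "noindex" in directives or "none" in directives


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(is_noindexed(page))
print(is_noindexed("<p>Hello</p>", {"X-Robots-Tag": "googlebot: noindex"}))
```

Running a check like this over every URL in a sitemap before launch is one way to catch template-level mistakes early.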

This is why teams that pair content strategy with technical audits win faster—addressing infrastructure lets creators focus on quality rather than firefighting delivery problems. If you want to automate detection and prioritize fixes, tools and workflows that combine crawling, performance audits, and content intent mapping speed decisions and reduce manual work (for example, by integrating `AI content automation` into the pipeline).

| Technical Component | Why it matters for content | Quick fixes | Diagnostic tools |
|---|---|---|---|
| Crawlability | Ensures bots can reach pages; blocked pages won’t rank | Fix `robots.txt`, remove accidental `noindex`, submit sitemaps | Google Search Console, Screaming Frog, DeepCrawl |
| Indexability | Determines which pages appear in SERPs | Remove conflicting meta tags, canonicalize duplicates | Google Search Console URL Inspection, Bing Webmaster |
| Site architecture | Influences topical authority and link equity flow | Add hub pages, improve internal links, flatten hierarchy | Screaming Frog, Sitebulb, Ahrefs Site Audit |
| Page speed | Affects engagement and ranking signals (CWV) | Optimize images, enable compression, defer JS | Lighthouse, PageSpeed Insights, WebPageTest |
| Structured data | Enables rich results and better SERP presentation | Add `schema.org` markup for articles, breadcrumbs, videos | Rich Results Test, Schema Markup Validator (validator.schema.org) |

Site Architecture, Crawlability & Indexing

Good site architecture makes pages discoverable and keeps search engines focused on your highest-value content. Start by treating crawlability as an operational constraint: prioritize what bots should index, make the path to important pages short, and use sitemaps/robots rules to guide crawlers rather than block them arbitrarily. That reduces wasted crawl budget and prevents index bloat while preserving link equity for product, category, and pillar pages.

Sitemaps, Robots.txt and Crawl Budget

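- XML sitemap best practice: include only canonical URLs, split anything over 50,000 URLs (or 50 MB uncompressed) into multiple files listed in a sitemap index, and update sitemaps when content changes.

- Robots.txt formatting: use `User-agent`, `Allow`, and `Disallow` rules with root-relative paths (starting with `/`), give the `Sitemap` line as an absolute URL, and check the file with the robots.txt report in Google Search Console.

- Crawl budget basics: mainly a concern for very large sites (Google's guidance cites roughly 1 million+ pages) or sites with 10,000+ pages that change daily; reduce soft-404s and duplicate content so crawlers don't waste it.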
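The splitting rule can be scripted. This is a minimal sketch, assuming a plain Python list of canonical URLs and a hypothetical `sitemap-N.xml` naming scheme; it enforces only the 50,000-URL limit, so unusually long URLs could still push a file past 50 MB.

```python
from xml.sax.saxutils import escape

MAX_URLS_PER_SITEMAP = 50_000  # sitemaps.org per-file limit (files must also stay under 50 MB)
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XML_DECL = '<?xml version="1.0" encoding="UTF-8"?>'


def build_sitemaps(urls, base_url, max_urls=MAX_URLS_PER_SITEMAP):
    """Split canonical URLs into sitemap files plus a sitemap index.

    Returns a dict mapping file name -> XML string. base_url is where the
    files will be served from and must end with "/".
    """
    files = {}
    index_entries = []
    for start in range(0, len(urls), max_urls):
        name = f"sitemap-{start // max_urls + 1}.xml"  # hypothetical naming scheme
        entries = "".join(
            f"<url><loc>{escape(url)}</loc></url>"
            for url in urls[start:start + max_urls]
        )
        files[name] = f'{XML_DECL}<urlset xmlns="{SITEMAP_NS}">{entries}</urlset>'
        index_entries.append(f"<sitemap><loc>{escape(base_url + name)}</loc></sitemap>")
    index_body = "".join(index_entries)
    files["sitemap_index.xml"] = (
        f'{XML_DECL}<sitemapindex xmlns="{SITEMAP_NS}">{index_body}</sitemapindex>'
    )
    return files


files = build_sitemaps(
    [f"https://example.com/blog/post-{i}" for i in range(120_000)],
    "https://example.com/",
)
print(sorted(files))
```

Only `sitemap_index.xml` then needs to be submitted in Search Console or referenced from `robots.txt`.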

| Element | Recommended configuration | Common mistakes | Validation tool |
|---|---|---|---|
| XML sitemap | Submit a sitemap index, list canonical URLs, max 50,000 URLs per file | Including non-canonical or 404 URLs | Screaming Frog, Google Search Console |
| Robots.txt | `User-agent: *` rules, allow CSS/JS, reference the sitemap | Blocking assets or the sitemap, malformed syntax | robots.txt report in Google Search Console |
| Noindex/nofollow use | Use `noindex` for thin pages; keep links followed unless a page is truly isolated | Blocking via `robots.txt` instead of `noindex` (crawlers can't see a `noindex` on a page they aren't allowed to fetch) | Sitebulb, Bing Webmaster Tools |
| Pagination and canonicalization | Self-referencing canonical on each paginated page; `rel="next"`/`rel="prev"` optional (Google no longer uses them) | Canonicalizing every page to page 1, canonical loops | Screaming Frog, manual crawl |
| Large faceted navigation | Disallow low-value query strings; canonicalize filtered views to the main category page | Indexing millions of filter combos | Search Console Page indexing report, log file analysis (the URL Parameters tool was retired in 2022) |

Example `robots.txt` snippet:

```txt
User-agent: *
Allow: /assets/
Disallow: /admin/
Sitemap: https://example.com/sitemap_index.xml
```
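One way to sanity-check rules like these before deploying them is Python's built-in `urllib.robotparser`, shown here with the example rules and the sitemap given as an absolute URL (the form the `Sitemap` directive expects). One caveat to keep in mind: this parser applies the first matching rule in file order, whereas Google applies the most specific (longest) matching path, so results can differ when `Allow` and `Disallow` rules overlap. For the non-overlapping rules here, both interpretations agree.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Allow: /assets/
Disallow: /admin/
Sitemap: https://example.com/sitemap_index.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for url in (
    "https://example.com/admin/settings",
    "https://example.com/assets/app.css",
    "https://example.com/blog/technical-seo",
):
    verdict = "allowed" if parser.can_fetch("Googlebot", url) else "blocked"
    print(f"{url} -> {verdict}")

# site_maps() returns the Sitemap URLs found in the file (Python 3.8+).
print(parser.site_maps())
```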

URL Structure, Redirects and Canonicals
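Redirect chains (A → B → C) add latency and waste crawl budget, and redirect loops make pages unreachable. As a minimal sketch, assuming your redirect rules can be exported as a simple source-to-target mapping (a hypothetical format; real rules may live in server config or a CMS plugin), the function below finds each URL's final destination and flags chains and loops:

```python
def resolve_redirects(redirects, max_hops=10):
    """Map every redirected URL to its final destination.

    redirects: dict of source URL -> target URL (one hop each).
    Returns (final_targets, chains, loops):
      final_targets: source -> last URL reached
      chains: sources that need more than one hop to reach their destination
      loops: sources whose path revisits a URL or exceeds max_hops
    """
    final_targets, chains, loops = {}, [], []
    for source in redirects:
        seen = [source]
        current = redirects[source]
        while current in redirects:
            if current in seen or len(seen) > max_hops:
                loops.append(source)
                break
            seen.append(current)
            current = redirects[current]
        else:
            # The loop ended without a break: current is a non-redirecting URL.
            final_targets[source] = current
            if len(seen) > 1:
                chains.append(source)
    return final_targets, chains, loops


rules = {"/a": "/b", "/b": "/c", "/old": "/new", "/x": "/y", "/y": "/x"}
final, chains, loops = resolve_redirects(rules)
print(final, chains, loops)
```

Rewriting each chained rule to point straight at its `final_targets` entry removes the intermediate hops; loops need a human decision about the correct destination.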

Understanding these architecture patterns helps teams make faster editorial and engineering decisions while keeping search engines focused on the pages that matter. When implemented correctly, this reduces wasted crawling and maintenance time so creators can concentrate on content that drives traffic.

Performance Optimization & Core Web Vitals

Fast, reliable pages start with a diagnostic-first approach: run targeted audits, fix the highest-impact issues first, then iterate on server and delivery optimizations. Start by measuring LCP, INP (which replaced FID as a Core Web Vital in March 2024), and CLS. Use field data from the Chrome UX Report to see what real users experience, and lab tools like Lighthouse and WebPageTest to debug; lab runs can't measure INP directly, so Total Blocking Time serves as the lab proxy. Interpret results by focusing on the largest contentful paint element, interaction-blocking scripts, and layout-shift culprits. From there, prioritize fixes that reduce load time and interaction delay for the majority of users.
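Google assesses Core Web Vitals at the 75th percentile of real-user page loads, using published thresholds: LCP ≤ 2.5s is good and > 4s is poor; INP ≤ 200ms is good and > 500ms is poor; CLS ≤ 0.1 is good and > 0.25 is poor. The sketch below applies those thresholds to field samples. It uses a simple nearest-rank percentile, which only approximates how CrUX aggregates data, and the sample values are illustrative, not real measurements.

```python
import math

# Published Core Web Vitals thresholds: (good upper bound, poor lower bound)
THRESHOLDS = {
    "LCP": (2500, 4000),  # milliseconds
    "INP": (200, 500),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless layout-shift score
}


def p75(samples):
    """75th percentile using the nearest-rank method."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def assess(metric, samples):
    """Return (p75 value, rating) for one metric."""
    value = p75(samples)
    good, poor = THRESHOLDS[metric]
    if value <= good:
        rating = "good"
    elif value <= poor:
        rating = "needs improvement"
    else:
        rating = "poor"
    return value, rating


# Illustrative per-pageview samples, not real data.
field_data = {
    "LCP": [1800, 2100, 2600, 3900, 2300, 2200, 1900, 4200],
    "INP": [120, 180, 90, 250, 160, 140, 300, 110],
    "CLS": [0.02, 0.05, 0.12, 0.0, 0.08, 0.3, 0.04, 0.06],
}
for metric, samples in field_data.items():
    value, rating = assess(metric, samples)
    print(f"{metric}: p75={value} -> {rating}")
```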

How to diagnose and act

Platform-specific tips

- WordPress: use a well-coded cache plugin (WP Rocket or equivalent), combine with a CDN and image optimization plugin.

- Headless/Static: leverage build-time image transforms and edge CDNs (Netlify, Vercel) to deliver pre-rendered HTML.

- E-commerce/Complex: consider server-side rendering and selective hydration for product lists.

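- TTFB matters: slow TTFB signals limited server capacity, slow database queries, or poor edge routing. Google's web.dev guidance treats a 75th-percentile TTFB of 0.8s or less as good; keeping the median under 200ms is a stricter, practical target for SEO-sensitive sites.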

- When to upgrade or add a CDN: upgrade when average concurrency or TTFB remains high after caching; add a CDN immediately if you have global users or burst traffic.

- HTTP/2 and HTTP/3 benefits: multiplexing, header compression, and connection reuse from HTTP/2 reduce latency; HTTP/3 (QUIC) further improves loss recovery and connection setup on lossy mobile networks.

```bash
# quick Lighthouse run and save report
lighthouse https://example.com --output html --output-path ./report.html --only-categories=performance
```

Pairing diagnostic runs with server metrics (CPU, queue length, DB slow queries) shows whether optimization is front-end only or requires hosting upgrades. For content teams that want repeatable publishing-to-performance workflows, tools that auto-optimize images, enforce critical CSS, and benchmark content changes can save weeks of manual effort; consider integrating an automation layer to scale these tasks.

| Hosting type | Best for | Average cost range | Performance considerations |
|---|---|---|---|
| Shared hosting | Small blogs, low traffic | $2–$15/month | Low cost, variable TTFB, limited resources |
| Managed WordPress | SMBs using WP, easy ops | $20–$100/month | Optimized stack, built-in caching, CDN add-ons |
| VPS / Cloud (e.g., AWS Lightsail, DigitalOcean) | Growing sites, developers | $5–$80/month | Scalable CPU/RAM, requires ops, better TTFB control |
| Headless/Static hosting with CDN (Netlify/Vercel) | Marketing sites, docs | Free–$50+/month | Instant CDN edge, pre-rendering, fast global delivery |
| Enterprise / Dedicated | High-traffic platforms | $200–$2000+/month | Dedicated resources, custom caching, SLAs, advanced DDoS protection |
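To track results over time, the Lighthouse command in this section can write JSON instead of HTML (`--output json --output-path ./report.json`). A minimal sketch for checking that report against performance budgets follows. The budget values are hypothetical, and it assumes the audit IDs used by recent Lighthouse versions (`largest-contentful-paint`, `cumulative-layout-shift`, `total-blocking-time`, `server-response-time`), skipping any that are missing. Total Blocking Time stands in for INP because lab runs have no real user interactions.

```python
import json

# Hypothetical budgets for illustration; tune them to your own targets.
BUDGETS = {
    "largest-contentful-paint": 2500,  # ms
    "cumulative-layout-shift": 0.1,    # unitless
    "total-blocking-time": 200,        # ms, lab proxy for responsiveness
    "server-response-time": 600,       # ms
}


def check_budgets(report, budgets=BUDGETS):
    """Return (performance score 0-1, list of (audit_id, value, budget) over budget)."""
    score = report["categories"]["performance"]["score"]
    failures = []
    for audit_id, budget in budgets.items():
        audit = report["audits"].get(audit_id)
        if audit is None or audit.get("numericValue") is None:
            continue  # audit absent in this Lighthouse version or run
        value = audit["numericValue"]
        if value > budget:
            failures.append((audit_id, value, budget))
    return score, failures


def check_report_file(path):
    """Load a Lighthouse JSON report from disk and check it."""
    with open(path, encoding="utf-8") as fh:
        return check_budgets(json.load(fh))
```

In a CI step, `score, failures = check_report_file("./report.json")` followed by failing the build when `failures` is non-empty is one way to stop performance regressions from shipping with new content.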

Understanding these principles helps you choose the right fixes and infrastructure investments so pages feel fast for real users, not just lab tests. When teams combine targeted diagnostics with sensible hosting and delivery choices, performance becomes predictable rather than reactive.

Indexing Controls, Metadata & Structured Data

Start by treating metadata, canonicals, and structured data as the control layer that tells search engines what to index, how to interpret content, and where to show rich results. Well-written titles and descriptions can lift click-through rates; canonical tags consolidate duplicate-content signals onto one preferred URL; and structured data makes pages eligible for rich results such as breadcrumbs, article enhancements, and video previews. Together they reduce guesswork for crawlers and give your pages the best chance to rank and convert.

Meta tags: titles and descriptions that earn clicks

- Title tags: Keep them concise, include primary intent keyword early, and use brand sparingly. Aim for ~50–60 characters and prioritize unique messaging per page.

- Meta descriptions: Use persuasive language, include an action or value prop, and keep under ~155–160 characters for desktop visibility. Write one for humans, not just search engines.

- Practical tip: Test title and description changes on groups of comparable pages (SEO split testing) rather than one page at a time, and measure CTR lift by tracking impressions → CTR → sessions in Search Console and your analytics.
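Length and uniqueness checks are easy to automate across a site. This sketch assumes page metadata has already been exported (for example, from a crawl) into a dictionary keyed by URL, which is a hypothetical structure. The character limits mirror the guidance above, but Google actually truncates by pixel width, so treat them as approximations.

```python
from collections import Counter

TITLE_MAX = 60         # approximate; Google truncates by pixel width, not characters
DESCRIPTION_MAX = 160  # approximate desktop display limit


def audit_metadata(pages):
    """Flag missing, overlong, or duplicate titles and descriptions.

    pages: dict of URL -> {"title": str, "description": str}
    Returns a list of (url, problem) tuples.
    """
    title_counts = Counter(meta.get("title", "").strip().lower() for meta in pages.values())
    issues = []
    for url, meta in pages.items():
        title = meta.get("title", "").strip()
        description = meta.get("description", "").strip()
        if not title:
            issues.append((url, "missing title"))
        else:
            if len(title) > TITLE_MAX:
                issues.append((url, f"title is {len(title)} chars"))
            if title_counts[title.lower()] > 1:
                issues.append((url, "duplicate title"))
        if not description:
            issues.append((url, "missing description"))
        elif len(description) > DESCRIPTION_MAX:
            issues.append((url, f"description is {len(description)} chars"))
    return issues


pages = {
    "/guide": {"title": "Technical SEO Guide", "description": "Learn the basics."},
    "/guide-old": {"title": "Technical SEO Guide", "description": ""},
}
for url, problem in audit_metadata(pages):
    print(url, problem)
```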

Canonicals and pagination: stop duplicate content from spreading authority

- Canonical tags (`rel="canonical"`): Point duplicate or near-duplicate pages to the preferred URL to concentrate ranking signals. Treat the tag as a strong hint rather than a directive; search engines may choose a different canonical if other signals disagree.

- Paginated content: Google no longer uses `rel="prev"`/`rel="next"`, though adding them does no harm and other search engines may read them. Give each paginated page a self-referencing canonical rather than pointing every page at page 1; canonicalizing to a "view all" page makes sense only when that page actually contains all the items.

- Warning: Canonical misuse can accidentally deindex pages—validate canonical chains before deployment.
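A pre-deployment canonical check can be scripted against crawl data. The sketch assumes two hypothetical exports, the canonical URL declared on each page and each URL's HTTP status, and flags canonicals that point at non-200 URLs or at pages that themselves canonicalize elsewhere (chains, including loops).

```python
def validate_canonicals(canonicals, status_codes):
    """Flag canonical tags that are likely to be ignored or to mislead crawlers.

    canonicals: dict of page URL -> canonical URL declared on that page
    status_codes: dict of URL -> HTTP status code observed in a crawl
    Returns a list of (url, problem) tuples.
    """
    problems = []
    for url, target in canonicals.items():
        if target == url:
            continue  # self-referencing canonical: nothing to check
        status = status_codes.get(target, "not crawled")
        if status != 200:
            problems.append((url, f"canonical target status: {status}"))
        elif canonicals.get(target, target) != target:
            # The target points somewhere else too: a chain (or a loop).
            problems.append((url, f"chain: {target} canonicalizes to {canonicals[target]}"))
    return problems


canon = {"/p1": "/p1", "/p2": "/p1", "/p3": "/p2", "/p4": "/gone"}
status = {"/p1": 200, "/p2": 200, "/p3": 200, "/p4": 200, "/gone": 404}
for url, problem in validate_canonicals(canon, status):
    print(url, problem)
```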

Implementing Structured Data (Schema)

A minimal `Article` example; on a live page it goes inside a `<script type="application/ld+json">` tag:

```json
{
  "@context": "https://schema.org",
  "@type": "Article",
  "headline": "Indexing Controls, Metadata & Structured Data",
  "author": {"@type": "Person", "name": "Author Name"},
  "datePublished": "2025-11-20",
  "mainEntityOfPage": {"@type": "WebPage", "@id": "https://example.com/this-article"}
}
```
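If your CMS templates generate this markup, building it from data avoids hand-editing JSON. A minimal sketch, assuming a Python-based templating step (`article_jsonld` is a hypothetical helper name): it serializes with `json.dumps`, so quotes and commas are always valid, and escapes `</` so text in a headline cannot close the surrounding `<script>` tag early.

```python
import json


def article_jsonld(headline, author, date_published, url):
    """Build Article JSON-LD wrapped in the script tag search engines read."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date string
        "mainEntityOfPage": {"@type": "WebPage", "@id": url},
    }
    payload = json.dumps(data, ensure_ascii=False, indent=2)
    # "<\/" is a valid JSON escape for "</" and keeps the <script> element intact.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


print(article_jsonld(
    "Indexing Controls, Metadata & Structured Data",
    "Author Name",
    "2025-11-20",
    "https://example.com/this-article",
))
```

Validate the rendered page with the Rich Results Test after deploying, since generated markup can still be incomplete for a given rich-result type.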

| Schema Type | Best use case | SERP benefit | Implementation complexity |
|---|---|---|---|
| Article | News, long-form posts | Rich article result, enhanced display | Low: basic fields only |
| FAQ | Pages answering multiple questions | FAQ rich results, which Google has shown only for well-known, authoritative government and health sites since August 2023 | Low: question/answer pairs |
| HowTo | Step-by-step guides | No Google rich result since How-to results were deprecated in 2023; the markup remains valid schema.org | Medium: structured steps + images |
| Breadcrumb | Site hierarchical navigation | Breadcrumbs in SERPs for clarity | Low: list of items |
| VideoObject | Embedded/hosted videos | Video thumbnail & player in SERPs | Medium: `name`, `thumbnailUrl`, `uploadDate` required; `duration` recommended |
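Breadcrumb markup is among the simplest to generate because it mirrors the page's position in the site hierarchy. A sketch, assuming the breadcrumb trail is available as ordered (name, URL) pairs from your CMS (a hypothetical input); positions start at 1, as Google's breadcrumb documentation describes.

```python
import json


def breadcrumb_jsonld(trail):
    """Build BreadcrumbList JSON-LD from an ordered list of (name, url) pairs."""
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": position, "name": name, "item": url}
            for position, (name, url) in enumerate(trail, start=1)
        ],
    }, indent=2)


print(breadcrumb_jsonld([
    ("Blog", "https://example.com/blog/"),
    ("Technical SEO", "https://example.com/blog/technical-seo/"),
]))
```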

Understanding these controls lets teams push accurate signals to search engines and prioritize development effort where it drives the most visible gains. When implemented correctly, this approach reduces manual rework and surfaces content more effectively in search.

Monitoring, Auditing and Ongoing Maintenance

Continuous monitoring keeps content visible and healthy; effective auditing turns signals into action. Start by centralizing telemetry (crawl data, speed metrics, server logs, schema reports) and schedule automated audits so issues surface before they cost traffic. Combine periodic human reviews with automated alerts: machines detect regressions at scale, humans prioritize nuance and intent alignment.

Tools, automated audits and checklist

- Consolidate telemetry: collect `Search Console` data, Lighthouse reports, crawl exports, and server logs in one dashboard.

- Audit cadence: run lightweight checks weekly, full technical audits monthly, and governance reviews quarterly.

- Checklist essentials: crawl coverage, indexation anomalies, canonical consistency, page speed, mobile UX, schema validity, redirect loops, and log-sampled bot behavior.
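For the log-sampled bot behavior item, a short script can summarize what Googlebot actually requests. This sketch assumes access logs in the common Apache/Nginx "combined" format and filters on the user-agent string only; because user agents can be spoofed, confirm important findings with a reverse DNS lookup, as Google recommends for verifying Googlebot.

```python
import re
from collections import Counter

# Apache/Nginx "combined" log format:
# ip ident user [time] "METHOD path PROTOCOL" status bytes "referrer" "user-agent"
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_bot_hits(lines, bot_token="Googlebot"):
    """Count status codes and paths requested by a crawler, matched by user-agent substring."""
    status_counts, path_counts = Counter(), Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if not match or bot_token not in match.group("agent"):
            continue  # unparseable line or a different client
        status_counts[match.group("status")] += 1
        path_counts[match.group("path")] += 1
    return status_counts, path_counts


# Usage with a real file (the path is hypothetical):
# with open("/var/log/nginx/access.log", encoding="utf-8", errors="replace") as fh:
#     statuses, paths = summarize_bot_hits(fh)
#     print(statuses.most_common(), paths.most_common(20))
```

A rising share of 404 or 5xx responses in `status_counts`, or crawl hits concentrated on parameter URLs in `path_counts`, are typical signals worth adding to the audit.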

Interpreting audit results and prioritizing fixes

Start with a simple scoring model: priority = impact ÷ effort. Score each issue 1–5 for traffic impact and 1–5 for fix effort, then divide impact by effort, so issues with high impact and low effort jump to the top.

- High impact, low effort: fix missing meta descriptions on top 20 landing pages, correct broken internal links from popular hubs, implement `rel=canonical` on obvious duplicates.

- High impact, high effort: page template migrations, large-scale pagination fixes — plan across sprints.

- Low impact, low effort: small schema tweaks on low-traffic pages — batch into maintenance sprints.

Practical scoring template (copy into your issue tracker):

```yaml
issue: "Missing meta on /product-x"
traffic_impact: 4
effort: 1
priority_score: 4
sprint: 1
```
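The same template can drive a sortable backlog. A minimal sketch using the template's field names: it computes `priority_score` as impact divided by effort, so that high-impact, low-effort fixes sort first, and breaks ties in favor of higher impact. The `Issue` class and sample backlog are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    issue: str
    traffic_impact: int  # 1 (minor) to 5 (major)
    effort: int          # 1 (trivial) to 5 (multi-sprint)

    @property
    def priority_score(self) -> float:
        # Dividing by effort makes cheap, high-impact fixes score highest.
        return self.traffic_impact / self.effort


def prioritize(issues):
    """Sort by priority score, breaking ties in favor of higher traffic impact."""
    return sorted(issues, key=lambda i: (i.priority_score, i.traffic_impact), reverse=True)


backlog = [
    Issue("Template migration", traffic_impact=5, effort=5),
    Issue("Missing meta on /product-x", traffic_impact=4, effort=1),
    Issue("Schema tweak on low-traffic page", traffic_impact=1, effort=1),
]
for item in prioritize(backlog):
    print(f"{item.priority_score:.1f}  {item.issue}")
```

The tie-break matters because a 5/5 migration and a 1/1 tweak both score 1.0; ranking the migration higher keeps it visible for sprint planning.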

| Tool | Core features | Best for | Cost |
|---|---|---|---|
| Google Search Console | Index coverage, performance, URL inspection | Indexing & queries | Free |
| Lighthouse / PageSpeed | Lab/field speed, accessibility, best practices | Page performance audits | Free |
| Screaming Frog | Site crawl, redirects, canonical checks | Deep technical crawls | £209/year (license) |
| Log File Analyzer | Bot behavior, crawl frequency, server status | Crawl budget analysis | Varies ($/month) |
| Sitebulb | Crawl + visual reports, CLI automation | Actionable technical reports | From $13/month |
| Ahrefs | Site audit, backlink & organic keywords | Competitive tech+link audits | From $99/month |
| SEMrush | Site audit, site health, crawlability | Integrated marketing + SEO | From $119.95/month |
| DeepCrawl | Enterprise crawling, custom rules | Large sites, enterprise scale | From $89/month |
| GTmetrix | Waterfall, performance scores | Front-end speed troubleshooting | Free / Pro tiers |
| Cloudflare Logs | Edge logs, performance/security events | CDN-level diagnostics | Free tier / paid logs |

Understanding these practices helps teams move faster without sacrificing content quality.


Integrating Technical SEO into Your Content Optimization Workflow

Integrate technical SEO early and continuously so fixes don't become last-minute firefights. Start technical checks at the content brief stage, enforce clear handoffs with ticketing, and monitor post-publish signals so you can roll back or iterate fast. This keeps content teams productive while ensuring search engines can crawl, render, and rank pages as intended. Practical steps include a pre-publish checklist that runs automated audits, a lightweight ticketing protocol for engineers, and a short post-publish monitoring window (48–72 hours) that tracks indexation, crawl errors, and Core Web Vitals trends. Teams that adopt this flow tend to see fewer regressions, and gains in impressions and click-through rate become easier to measure.

Workflow: From content brief to post-publish checks

- Pre-publish checklist: Run the page URL through Lighthouse, check canonical tags, verify structured data, confirm hreflang if applicable, and validate robots meta directives.

- Handoff and ticketing: Create one ticket per technical task with clear acceptance criteria (device, URL, expected metric). Use labels like `tech-seo`, `critical`, `needs-qa`.

- Content engineering playbook: Keep short templates for common fixes (canonicalization, lazy-load images, sitemap updates) to speed handoffs.

- Post-publish monitoring: Monitor Google Search Console for index coverage, real-time logs for 404 spikes, and Core Web Vitals field data (CrUX or your own real-user monitoring) for regressions, using Lighthouse to reproduce them.

- Rollback triggers: Revert or unpublish when traffic drops >30% for a page within 72 hours following a major deploy, or when LCP increases by >200ms on the majority of pageviews.
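The two rollback triggers above can be encoded so that monitoring raises them automatically. A sketch under stated assumptions: sessions are compared across equal 72-hour windows before and after the deploy, and the median per-pageview LCP serves as a simple proxy for "the majority of pageviews". The function name and inputs are hypothetical.

```python
from statistics import median

TRAFFIC_DROP_THRESHOLD = 0.30  # more than a 30% drop within the 72-hour window
LCP_REGRESSION_MS = 200        # more than a 200 ms LCP increase


def should_roll_back(sessions_before, sessions_after, lcp_before_ms, lcp_after_ms):
    """Return the list of triggered rollback reasons (empty means keep the deploy).

    sessions_*: page sessions for comparable 72-hour windows before/after the deploy
    lcp_*_ms: per-pageview LCP samples in milliseconds before/after the deploy
    """
    reasons = []
    if sessions_before > 0:
        drop = (sessions_before - sessions_after) / sessions_before
        if drop > TRAFFIC_DROP_THRESHOLD:
            reasons.append(f"traffic down {drop:.0%}")
    # Median used as a proxy for "the majority of pageviews".
    lcp_delta = median(lcp_after_ms) - median(lcp_before_ms)
    if lcp_delta > LCP_REGRESSION_MS:
        reasons.append(f"median LCP up {lcp_delta:.0f} ms")
    return reasons


print(should_roll_back(1000, 600, [2000, 2100, 2200], [2000, 2100, 2200]))
```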

Measuring impact: KPIs, ROI and reporting

| KPI | Baseline (example) | Target | Measurement source |
|---|---|---|---|
| Organic impressions | 150,000/mo | 210,000/mo (+40%) | Google Search Console |
| Organic clicks | 9,000/mo | 12,600/mo (+40%) | Google Search Console |
| Average position | 27.3 | 18–20 | Google Search Console |
| Average session duration | 1m42s | 2m15s | Google Analytics |
| Core Web Vitals (LCP/CLS/INP) | 3.1s / 0.15 / 200ms | <2.5s / <0.1 / <150ms | Field data (CrUX, RUM); Lighthouse for lab LCP/CLS |
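To report progress against targets like these, one option is to measure what fraction of the baseline-to-target gap has been closed. The sketch below works for both higher-is-better and lower-is-better KPIs. The "current" values are hypothetical, and range or inequality targets are reduced to a single number (19 for the 18–20 position range, 2.5s for LCP).

```python
def progress_toward_target(baseline, current, target):
    """Fraction of the baseline-to-target gap closed so far.

    Works for higher-is-better (clicks) and lower-is-better (position, LCP)
    KPIs, because the sign of (target - baseline) encodes the direction.
    """
    if target == baseline:
        raise ValueError("target must differ from baseline")
    return (current - baseline) / (target - baseline)


# Baselines and targets from the example table; "current" values are hypothetical.
print(f"Impressions: {progress_toward_target(150_000, 180_000, 210_000):.0%}")
print(f"Avg position: {progress_toward_target(27.3, 23.0, 19.0):.0%}")
print(f"LCP (s): {progress_toward_target(3.1, 2.9, 2.5):.0%}")
```

Values above 1.0 mean the target was exceeded; negative values mean the KPI moved away from it.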

Understanding these principles helps teams move faster without sacrificing quality. When implemented consistently, this workflow reduces firefights and lets creators focus on content that drives growth.

Conclusion

Bringing technical SEO into the heart of your content process pays off: pages get crawled faster, intent aligns with structure, and content teams stop guessing which fixes move the needle. From aligning URL structures to automating schema and crawl monitoring, focus on a handful of repeatable steps and you'll see steady improvements. Consider these practical next moves:

- Audit crawlability and fix blocked resources or redirect chains.

- Automate schema and metadata so every new page ships with search-ready markup.

- Measure impact weekly with rank and crawl-error dashboards to spot regressions early.

Teams that treat technical fixes as one-off projects tend to see inconsistent results; teams that bake automation into the workflow, using scheduled audits and templated metadata, tend to see clearer, faster gains in visibility and fewer production rollbacks. If you're wondering whether to start small or overhaul everything, start with the highest-traffic templates and automate from there; that approach balances risk and reward while delivering quick wins.

If you want to streamline execution and reduce manual toil, consider platforms that plug into your CMS and monitoring tools. For teams looking to scale this reliably, automating content and technical SEO workflows with Scaleblogger is a practical next step: request a demo or trial to see how automation can free your team to focus on strategy.